3. Opportunities and risks of AI

Overview

•This chapter explores opportunities and risks of AI for Victoria’s courts and tribunals.

•AI offers opportunities to increase access to justice and improve court efficiency and innovation.

•Different types of AI used within court and tribunal settings carry different types and scales of risk. Risk also varies depending on who is using AI and in what context.

•New opportunities and risks will emerge as AI evolves. Over time, AI will be applied to more diverse tasks, which may increase the risk of unintended consequences. Understanding the AI lifecycle and AI’s potential risks is fundamental to using it safely.

•Given the pace of technological change, a significant risk for Victoria’s courts and tribunals is the risk of not acting.

Opportunities of AI for courts and tribunals

3.1 Our consultation paper identified potential opportunities for using AI in courts and tribunals. Below we discuss stakeholder views on these opportunities, building on examples discussed in Chapter 2. Some opportunities were based on existing pilots; others were hypothetical or based on AI uses in other court jurisdictions.

3.2 In submissions and consultations (see Table 1), we heard about a range of opportunities relating to:

•access to justice

•improved court efficiency and innovation.

Table 1: Identified opportunities for AI use in courts and tribunals

Access to justice

•providing timely access to court information for court users

•responding to common inquiries

•directing court users around court facilities

•reducing court processing times

•facilitating online hearings

•increasing access for people in remote and rural settings

•helping people navigate the justice system

•simplifying legal information and providing information in accessible plain English formats

•improving user experiences of the jury system

•providing automated support to court users, through prepopulating forms, compliance alerts or automated messages

•providing alternative solutions to disputes.[1]

Improved court efficiency and innovation

•automating administrative tasks, such as document processing, court scheduling and case triaging

•improving scheduling and rostering

•enhancing case management systems for court records, lists and documents

•improving electronic filing and extraction of data from filed documents

•providing timely access to transcripts and audio recordings

•assisting in the review of case data

•providing more effective data reporting and data analytics

•generating case summaries and chronologies

•improving search functionalities to identify relevant documents and materials

•assisting in the analysis and review of documents

•assisting in drafting and proofing documents such as emails and file notes

•conducting legal research

•reducing vicarious trauma for staff.[2]

3.3 Using AI safely is an important precondition for realising these opportunities. The Coroners Court said:

The Coroners Court is optimistic about the opportunities that AI presents to assist the Court in achieving its objectives and purposes. If managed safely and responsibly, AI tools have the potential to greatly enhance the administration of justice and improve outcomes for families and the wider community.[3]

Enhancing access to justice

3.4 AI offers significant opportunities to increase access to justice for court and tribunal users,[4] provided AI tools are safely implemented (see Table 1).[5]

3.5 As the Federation of Community Legal Centres and Justice Connect highlighted:

With rising unmet legal need and millions of people missing out on the legal help they need each year, the benefits of ethically adopting and using AI tools to improve access to justice for people who interact with Victoria’s legal system, courts and tribunals far outweighs any potential risks.[6]

3.6 Courts could implement AI to improve participation in proceedings. There may be opportunities to use AI tools to translate hearings and information into other languages, including sign language.[7] However, we were also cautioned about possible accuracy risks, as discussed later at paragraph [3.54]. We were frequently told that AI may be able to help self-represented litigants by providing access to advice and assistance in preparing well-structured court documents.[8] This matters because a recent Victorian study found that at least 78 per cent of respondents with legal needs did not receive necessary assistance, indicating a substantial gap in legal service provision.[9]

3.7 Using AI for online dispute resolution as part of court processes could also improve access to justice by:

•reducing the cost of resolving matters for litigants through lower legal expenses and court fees

•decreasing the time taken to resolve disputes, noting significant caseloads and backlogs in courts and tribunals

•simplifying the justice system with clearer pathways to resolve disputes

•giving court users more choice and autonomy in how they resolve their disputes

•increasing access for people in remote or rural areas through virtual court services, such as remotely accessible translation services.[10]

Improving court efficiency and innovation

3.8 If implemented safely and responsibly, AI may assist courts and lawyers to provide services and perform functions efficiently and effectively (see Table 1).[11] As we consider in Chapter 9, safe design depends on consultation with affected court users.

3.9 We heard that court operations could benefit from greater productivity and efficiency by automating some administrative or repetitive tasks.[12] AI could also support court and tribunal functions more broadly. However, outcomes will only improve if the use of AI is consistent with judicial values and principles. The United Nations Special Rapporteur on the independence of judges and lawyers stated that any decision to use or implement AI tools in a judicial system should be made by judges.[13]

3.10 The introduction of AI tools may in some instances improve outcomes for court employees. The Coroners Court highlighted that AI could help to reduce vicarious trauma for staff by reducing their exposure to large volumes of distressing images.[14] The Juries Commissioner highlighted that AI may also help minimise the impact of vicarious trauma on jurors.[15]

3.11 Opportunities to improve court efficiency should not come at the cost of quality of outcomes for court users. The Australian Human Rights Commission advised that ongoing monitoring and periodic review should occur after an AI system has been deployed, including regular testing for human rights compliance.[16] To realise the efficiencies of an AI tool, courts should assess its potential benefits and use data to measure whether these are achieved.[17] However, the Productivity Commission has observed that productivity gains from investment in AI may be difficult to measure.[18]

3.12 There are also opportunities for legal practices to use AI, which may indirectly benefit court efficiency.[19] As representatives of the Office of Public Prosecutions stated:

The use of AI technology has the potential to lead to enhanced productivity and efficiency across legal practice. It is anticipated that the effective use of AI will help cut through the administrative burden on staff and enable solicitors to spend more time engaging in in-depth legal analysis and decision making.[20]

3.13 More generally, AI-enabled technologies give Victoria’s courts and tribunals an opportunity to consider how the court system could operate in innovative ways. Representatives of the VLSB+C commented on those in the justice system who would prefer to hold on to traditional court practices:

We need to be more sophisticated than that because we will end up replicating current processes rather than thinking about how the justice system can work more effectively for more people.[21]

3.14 The UN’s Special Rapporteur on the independence of judges and lawyers considered:

States should identify, from the perspective of those experiencing justice problems, data-driven goals for the advancement of human rights. In some circumstances, AI may offer a path to achieving such goals. However, AI should not be adopted without careful assessment of its potential harms, whether these can be eliminated, and whether there are other solutions that are less risky and have fewer negative climate impacts.[22]

3.15 Harnessing the future opportunities of AI will require planning and vision. Examples from England and Wales, Singapore, and New Zealand show how technological innovation can be guided by coordinated planning. These include:

•The AI Action Plan for Justice, released in 2025 by the UK Ministry of Justice, which seeks to coordinate and drive the responsible adoption of AI across the justice system.[23] Before this, the HM Courts and Tribunals Service Reform Programme in England and Wales sought to transform court use of technology through digital solutions, including a single case management system for all criminal courts and the installation of video technology in 70 per cent of all courtrooms.[24]

•The Courts of the Future Taskforce established by the Supreme Court of Singapore in 2016, which led to the development of a five-year blueprint and the implementation of initiatives relating to online disputes, virtual hearings and AI.[25]

•The Digital Strategy for Courts and Tribunals introduced by the Chief Justice of New Zealand, which has a governance framework involving periodic review by the Heads of Bench.[26]

3.16 Adequate funding and planning will be required to effectively implement AI technologies.[27] As the Supreme Court noted:

The Court is currently operating in a resource constrained environment. While this encourages consideration of the use of new technologies to produce efficiencies, it also severely limits the Court’s ability to investigate, acquire, and implement new technologies.[28]

Risks of AI for courts and tribunals

3.17 In consultations and submissions, we heard about possible risks of using AI in Victoria’s courts and tribunals. These include risks identified in our consultation paper:

•breaches of information security and data privacy through disclosure of personal and sensitive information by individuals or courts

•lack of explainability and the opacity of AI technology

•bias, where an AI tool does not produce fair outcomes, including data (algorithmic) bias and systems (reinforcing) bias

•inaccuracy, hallucinations, mistakes, deepfakes and ‘scheming’

•reduced quality of outputs due to courts’ overreliance on new technologies

•access to justice challenges for people with lower digital skills or experiencing barriers to justice.

3.18 We also heard concerns about additional risks:

•improper use resulting in overreliance on AI, inaccurate use and potential infringement of human rights

•lack of transparency about how AI is used and who is responsible for its use

•intellectual property infringements resulting from the use of copyrighted information to develop and train AI models

•undermining of professional obligations if lawyers and experts do not understand the limitations of AI use

•loss of skills and capabilities, through deskilling or replacement of roles

•devaluing human connection where AI tools replace opportunities for court users to interact with court staff, widening the digital divide and increasing distrust in the justice system

•adverse environmental impacts due to increased energy consumption for AI systems

•reduced trust in the justice system and the potential for damage to institutional trust in courts

•risks during implementation due to poor design or poorly planned procurement and management of AI tools.

3.19 Building on the risks identified in our consultation paper, we heard more about how these risks could affect Victoria’s justice system. We discuss them below.

Information security and data privacy risks

3.20 We heard extensive concerns about information security and data privacy risks. The Office of the Victorian Information Commissioner suggested these risks are not new but are exacerbated by the speed and scale at which AI technologies analyse information and generate outputs.[29]

3.21 Victoria’s courts and tribunals deal with large volumes of confidential, sensitive and personal information. As discussed in our consultation paper, the risk to privacy will depend on what information is shared with AI tools, and how that information is processed and stored. Additional risks arise because AI may use data in ways that were not consented to when that data was collected.[30] Data security is also a concern: cyberattacks or tampering with algorithms could disrupt or influence court operations.[31] In recognition of these risks, the Canadian Judicial Council guidance recommends that courts implement information security systems and protocols, giving special consideration to AI-specific threats.[32]

3.22 The Supreme Court highlighted that the use of AI also requires consideration of other legislative obligations that restrict the publication or disclosure of information.[33]

3.23 Privacy concerns were also raised in relation to human rights. The Human Rights Law Centre stated that the use of AI technologies may infringe the right to privacy.[34] The Castan Centre cautioned that privacy risks were evident even in ‘innocuous’ administrative functions, like case management and scheduling, because uploading personal information onto an AI tool ‘creates open-ended risks’.[35] Privacy risks that arise from the use of AI are considered later in this chapter and in Chapter 5.

3.24 We also heard that entering personal information into public General Purpose AI tools like ChatGPT could breach client confidentiality obligations.[36] The Federation of Community Legal Centres and Justice Connect stated, ‘Confidential or privileged information should never be entered into public tools.’[37]

3.25 Our consultation paper outlined an example where the Office of the Victorian Information Commissioner found a child protection worker had used ChatGPT to draft a report for the Children’s Court.[38] This was found to be a serious breach of information privacy principles because it involved inputting personal and sensitive information into ChatGPT.[39]

3.26 The use of speech-to-text transcription or translation tools also raises concerns about client confidentiality, privacy and information and data security, particularly where tools use biometric data to identify individual speakers.[40]

3.27 These risks are particularly acute for Victoria’s courts and tribunals because of:

•large volumes of cases[41]

•confidential, sensitive and personal information relating to litigants, defendants and victims[42]

•data management practices that rely on third-party services.[43]

3.28 Numerous stakeholders noted that, with or without AI, there are always risks in courts’ management of data.[44] A representative of Cenitex added that information and privacy risks have not changed. Risk is ‘just amplified by the likelihood of it occurring when using AI tools’.[45]

3.29 Opportunities for Victoria’s courts and tribunals to address information security, privacy and data management risks are discussed in Chapter 9.

Lack of explainability

3.30 ‘Explainability’ refers to whether a person can explain how an AI model or tool makes predictions or decisions. AI models are often too complex to easily explain.[46] This is significant for courts and tribunals because procedural fairness requires that people can understand and challenge a decision, or the evidence informing a decision.

3.31 In our consultation paper, we highlighted two common barriers to explaining AI systems:

•the technical ‘black box’—many GenAI systems create responses based on statistical calculations so complex that the reasoning for those responses cannot be followed by humans

•commercial barriers—some developers seek to protect commercial advantage by refusing to explain the underlying methods of their AI products to courts.

3.32 There are also risks for judicial decision makers when using AI, as they have a duty to provide reasons for decisions. This is discussed further in Chapter 8.[47]

Bias

3.33 In our consultation paper, we drew a distinction between data and systems bias. Data (or algorithmic) bias arises from how an AI model has been trained, and can result from a developer’s use of selective or unrepresentative data.[48] Systems (or reinforcing) bias occurs when an AI model uses data that may be correct but reflects existing societal bias, amplifying negative societal effects over time.[49] This is particularly concerning because such bias can compound over time,[50] and there is no straightforward technical solution to address the risk.

3.34 A significant concern raised in submissions and consultations was the negative impact of bias on the right to a fair hearing. Human rights bodies and community legal centres expressed strong concerns about the risk of bias in AI-supported decision making where there is no human oversight.[51]

3.35 The Human Rights Law Centre said systemic bias was one of the primary risks associated with the use of AI in judicial processes.[52]

3.36 The Northern Community Legal Centre described systemic bias in judicial decision making as a primary concern, noting historical issues with discrimination and systemic bias in courts.[53]

Inaccuracy

3.37 Inaccuracy in responses generated by AI tools was a common concern. In a wide range of contexts, from summarising information in a legal document to legal research, GenAI tools can produce outputs that are incorrect or made up (hallucinated) but appear convincing.[54]

3.38 Public General Purpose AI tools such as ChatGPT are more likely to be inaccurate compared to models that:

a)are trained on subject-specific material (for example legal or organisation-specific large language models)

b)draw on subject-specific material (for example General Purpose AI Models connected to a subject-specific database).[55]

3.39 The second method is known as ‘Retrieval Augmented Generation’. It involves storing legal documents in dedicated databases from which relevant material is retrieved to inform the model’s response, allowing responses to be tailored to specific jurisdictions and legal contexts.

3.40 Although Retrieval Augmented Generation is a common way to address inaccuracy, it is important to recognise that it does not eliminate these risks.[56] A Stanford University study highlighted that claims about the reliability of some specialised AI products are overstated and that dedicated legal tools can hallucinate on a regular basis. Legal research tools assessed in the study were found to have hallucination rates between 17 and 33 per cent, compared to 43 per cent for GPT-4.[57]

3.41 There are now many examples where hallucinated AI responses have been submitted to courts in Australia.[58] In these instances, court submissions have included:

•cases that do not exist

•quotes that exist but are attributable to different cases

•cases that exist but consider different subject matter or have no relevance to the proceeding.[59]

This includes a Victorian matter where fake citations directly caused delays to the conclusion of a criminal hearing before the Supreme Court.[60]

3.42 Different AI tools may produce inaccurate responses depending on:

•the type of AI and whether it is suitable for the task[61]

•whether the AI model has continued to be updated[62]

•whether the AI model has been the subject of malicious attacks that impact its behaviour or capability[63]

•the type of data the AI model was trained on (for example, there may be jurisdictional differences if a tool was trained on legal cases from the United States rather than from Australian jurisdictions)[64]

•whether the tool draws on specialised material, such as legal databases, to respond to queries (also known as Retrieval Augmented Generation)[65]

•the detail, context and refinement of the prompt.[66]

3.43 A research study conducted at the University of Wollongong found that although undergraduate law papers generated by GenAI generally did not outperform those of the average law student, well-designed prompts and fine-tuning enabled some GenAI papers to exceed the average score.[67] Overall, the study noted that GenAI was particularly weak at critical analysis tasks, but newer models showed greater accuracy and more structured reasoning.[68]

3.44 The risk of inaccuracy was a common concern for Victoria’s courts and tribunals when considering the potential future use of AI tools. Representatives of the Magistrates’ Court stated that the focus of their transcription pilot (discussed in Chapter 2) was to test the accuracy of speech-to-text transcription services.[69]

3.45 In addition, we heard about AI ‘scheming’, where certain AI tools strategically pursue goals that diverge from the intentions set by human developers.[70] This can involve a wide range of misleading behaviours, from misrepresenting a response to disabling an oversight mechanism to avoid being shut down.[71] An OpenAI report observed that this risk is evident in major AI systems, which, much like humans, are adept at exploiting loopholes in their reward structures.[72]

Lack of reliability

3.46 We heard about risks related to reliability and validity. While related to accuracy, these concerns focus on whether the AI tool works as intended and performs reliably and consistently over time.[73] One risk we heard about was ‘model drift’, where an AI model’s training is not updated and the model becomes inaccurate or outdated over time.[74]

3.47 We heard that reliability risks may be addressed through in-house and third-party testing, such as testing the consistency of outputs when the same question is asked repeatedly or across different situations.[75] We heard that testing the reliability of an AI tool is necessary, particularly in the context of a criminal hearing.[76] However, the complexity of AI models can make reliability difficult to demonstrate.

3.48 The accuracy and reliability of court-managed AI tools also depends on establishing and maintaining well-structured, well-governed data management systems, consistent with existing data management standards.[77] Some stakeholders said that reliability and accuracy required testing from independent third parties.[78] We also heard that adequate resourcing for the ongoing maintenance of internal data is important.[79]

Access to justice challenges

3.49 While some stakeholders saw opportunities to enhance access to justice, there were also concerns that AI use in courts and tribunals may impede access to justice.

3.50 Different groups and communities face barriers in their access or ability to use digital technologies. This is commonly referred to as the ‘digital divide’. Digital exclusion is influenced by socio-economic factors such as income and education.[80] This digital divide is also more likely to be experienced by older Australians, First Nations peoples, people with disability and people living in rural or regional areas.[81]

3.51 A recurring concern from stakeholders was that the introduction of AI by Victoria’s courts and tribunals could widen the existing digital divide. The Federation of Community Legal Centres and Justice Connect cautioned that, ‘The digital divide remains a challenge, as individuals with limited digital literacy or access may struggle to use AI-powered legal tools effectively’.[82]

3.52 The Northern Community Legal Centre highlighted that in Australia:

Those who are lower socioeconomic, living in public housing, those over the age of 75 years old, live remotely, and/or are First Nations people are experiencing stagnant or deteriorating levels of access. These cohorts represent some of those with the highest legal need and high levels of justiciable problems, including family violence.[83]

3.53 The Northern Community Legal Centre’s clients include some of those with the lowest access to, or capacity to use, technology.[84] It told us that many victim-survivors dealing with family violence are living in unstable accommodation and do not have access to a reliable internet connection.[85]

3.54 We heard that many AI tools are not currently sophisticated enough to navigate diverse migrant cultural contexts, and that this problem is often compounded for people who also experience educational disadvantage.[86] While transcription and translation tools offer opportunities to enhance access to justice, we were also cautioned that certain translation tools:

•are underpinned by the English language and cannot properly translate deep cultural differences[87]

•fail to understand vernacular use of different languages[88]

•may provide translations that do not reflect culturally sensitive experiences of trauma.[89]

3.55 A separate access to justice challenge relates to unequal access to high quality AI tools.[90] This issue touches on the right to a fair hearing and the ‘equality of arms’ principle, which requires parties to a legal proceeding to be ‘treated in a manner ensuring that they have a procedurally equal position to make their case during the whole course of the trial’.[91] Judge Vytautas Mizaras and others have considered that the use of AI may affect this principle, for example where certain parties gain an advantage through special access to technological expertise.[92]

3.56 There is a disparity in access to AI tools, with many parts of the legal sector lacking resources to develop AI systems.[93] Some stakeholders stated that AI tools available to self-represented litigants, smaller legal practices and community legal centres are likely to be less precise and reliable than those available to better-resourced organisations.[94]

3.57 In contrast, several larger law firms have developed in-house AI tools or invested in specialised legal-specific AI services.[95] While these specialised and often closed tools can reduce inaccuracies and information security concerns, their potential to widen the digital divide is apparent. We explore differences in the risk profiles of these types of AI later in this chapter.

Improper use

3.58 Unintended misuse of AI commonly arises when people overrely on AI tools, believing the technology to be infallible and not understanding its limitations.[96] As a result, they fail to review AI outputs before relying on them.

3.59 Courts and tribunals need to consider not only risks embedded in the AI tool but also the person using the tool. As the Law Institute of Victoria stated:

Members of the LIV note that a potential risk not identified in the Consultation Paper flows from the assumption that all users possess equal knowledge, skills, and understanding of AI technology, and thus the expectation that generative AI outputs will be consistent for all users, irrespective of their level of sophistication. This assumption is unrealistic and may result in unintended consequences.[97]

3.60 While unintended misuse of AI tools is a risk that applies to all court users, we heard specific concerns about self-represented litigants relying too heavily on AI tools like ChatGPT.[98] This could lead to inaccurate information being provided to courts, which may ‘create further burdens in workload for courts’[99] by increasing the court time and resources spent reviewing documents and slowing timely access to justice.[100]

3.61 In Queensland, District Court Judge Porter commented that while self-represented litigants do not have the same professional duties as lawyers, this does not give them ‘a free pass to uncritically adopt the output of AI models’, and that there may come a time when a litigant who is reckless as to the accuracy of their filings may be found to be in contempt of court.[101]

3.62 GenAI use could also result in more and longer AI-generated court documents ‘flooding’[102] the court system.

3.63 Improper uses can also occur with the production of fake evidence, such as where synthetic ‘deepfake’ content is created by court users.[103] Chapter 5 considers this topic in further depth.

3.64 There were also concerns about improper use by lawyers if the limitations of AI are not well understood. Overreliance by lawyers on AI tools can result in poorer quality or inaccurate submissions to courts, delays in resolving matters and other negative impacts on clients.[104] Risks arise where lawyers and others using AI do not appropriately review and verify AI-generated outputs. This is considered further in Chapters 5 and 10. Some people expressed concerns that AI software vendors were not appropriately alerting lawyers to the risks of inaccuracy from specialised AI legal tools.[105]

3.65 Responses by courts and tribunals to the improper use of AI have included referring lawyers to the relevant professional regulatory body.[106] In one instance, the VLSB+C varied the practising certificate of a lawyer who provided the court with inaccurate AI-generated citations and summaries, preventing him from operating his own practice or handling trust moneys.[107]

Lack of transparency

3.66 Transparency is a critical feature of public trust. It requires that organisations provide meaningful information on when an AI tool is used and how its use could affect individuals. Transparency is a principle included in national and international AI regulatory approaches, such as the Australian Government’s AI Ethics Principles.

3.67 During consultations there was a strong desire for courts and tribunals to be transparent about their use of AI tools.[108] In Chapter 9, we discuss methods for Victoria’s courts and VCAT to clearly communicate their use of AI.

Intellectual property infringements

3.68 General Purpose AI models are trained on large and diverse data sets. They are often developed by ‘scraping’ content directly from the internet, which may include copyrighted material.[109]

3.69 Stakeholders were concerned that General Purpose AI models have used copyrighted materials to train their models without permission or payment.[110] Stakeholders argued that Victoria’s courts and VCAT need to ensure compliance with copyright obligations and avoid ‘complicity in potential infringements by AI tools’.[111]

3.70 This risk also contributes to transparency issues, as there is often a ‘lack of information on what copyright materials had been used to train AI models and how those materials had been accessed’.[112] This can make it difficult to determine whether copyright laws have been infringed, or for affected parties to seek remuneration.[113]

Devaluing human connection

3.71 Community legal centres were concerned that the introduction of AI systems in Victoria’s courts and VCAT could entirely replace some human functions and access points.[114] For example, human phone services in courts could be replaced with online chatbots.[115] This was identified as particularly problematic for people with complex needs, as well as older individuals and those with disabilities.[116] The Victorian Advocacy League for Individuals with Disability suggested the introduction of AI tools may discount the importance of social interaction in helping people with a disability to have meaningful experiences of the administration of justice.[117]

3.72 Representatives from Eastern Community Legal Centre also highlighted that people who are confident and have the capacity to use technology may get a better outcome or more help than those who are less confident or less able to use technology.[118]

Loss of skills and capabilities

3.73 The introduction of AI may lead to the deskilling or replacement of some roles or jobs.[119] We heard concerns about the potential for AI transcription tools to replace interpreters over time, and the negative impact on outcomes for court users.[120]

3.74 Northern Community Legal Centre stated that the introduction of AI may further reduce perceived staffing needs by courts and tribunals and result in increased pressure on other sector services.[121]

3.75The Office of the Victorian Information Commissioner also cautioned that if an AI tool is used to automate parts of court processes or functions, it is possible that skills and knowledge for completing these processes will be lost. This is a risk if the AI system fails or tasks need to be completed manually.[122]

Reduced trust in the justice system

3.76An important risk relates to the distinctiveness of courts and tribunals as public institutions. In a recent speech about the advent of social media and AI, the Chief Justice of Western Australia explored what makes public trust in the judiciary distinct. He stated trust in any judge is derived from trust in the court as an institution:

our capacity for objectivity, and our human capacity for distinguishing between the real and the artificial, it will be precisely there that our institutional trustworthiness, and the trust upon which our legitimacy rests, may be renewed and in which it may be preserved.[123]

3.77Victoria’s courts and tribunals have responsibilities as public institutions to all Victorians and face significant risks if institutional trust is harmed.[124] As outlined by the Conference of State Court Administrators in the United States:

Public confidence in the courts depends not only on what judges and court administrators know about AI, but also what the public knows about how the courts themselves implement AI systems.[125]

3.78There are reputational risks when court staff and judicial officers use AI. As one submission stated:

The Court can foresee other potential issues if judicial officers were to use AI in judicial decision-making, which would include, broadly, harm to the public trust in judges and courts, and ‘truth decay’ caused by a decline of trust in legal decisions.[126]

3.79These risks are addressed in the guidelines we propose for judicial officers and court staff in Chapters 8 and 9.

Risks during implementation

3.80Resource considerations are important to support the effective implementation of AI systems.[127] For instance, the UK Action Plan refers to the need for sustained funding that ensures AI pilot programs can ‘transition to scalable solutions’.[128] Resources are required to develop skills and expertise within an organisation that can support delivery of AI,[129] from design and procurement through to implementation and eventual decommissioning.

3.81As considered at paragraph [3.48], resourcing considerations include establishing internal processes to help structure, align and maintain data systems. For example, reliance on custom data sets can minimise errors, but managing custom data is expensive and time consuming, as both digital infrastructure and underlying data require updates and monitoring to maintain data quality. There may also be issues with how AI tools work with the organisation’s existing technology.[130]

3.82The growth in the number and range of AI products will increase the risk of unintended consequences over time. Careful implementation is critical to managing many of the risks that have been identified. Emerging international guidance to courts highlights this view.[131] This was reiterated by the Law Institute of Victoria:

It is crucial that AI is implemented with caution, ensuring fairness, transparency, and human oversight to maintain the integrity of court processes.[132]

3.83We heard that:

•Victoria’s courts and tribunals may need expert advice to ensure procured AI tools can perform as intended[133]

•decisions to procure and use an AI tool should be informed by appropriate risk assessment[134]

•third-party data and information practices may not align with obligations under the Privacy and Data Protection Act 2014 (Vic) (PDP Act) and Public Record Office Victoria standards on record-keeping[135]

•monitoring and assessment should be ongoing[136]

•organisational planning is needed in the event an AI tool fails.[137]

3.84The Human Rights Law Centre stated that ‘AI systems, if poorly designed or implemented, risk undermining procedural fairness and the right to a fair trial’.[138]

Emerging opportunities and risks of AI

3.85The risks and opportunities identified above reflect the current state of AI. The speed and scale of technological change will influence future opportunities and risks. Some existing risks may be addressed by current regulatory approaches. But new uses and risks will also emerge, which may require different regulatory responses.[139]

3.86A representative of the Coronial Council stated:

Given the pace of change, there is a need to future-proof a court’s approach to regulating AI. We must keep up with what will be possible or available and applied in practice as the AI capability evolves.[140]

3.87A range of emerging technological innovations may affect the further use of AI in courts and tribunals. These include:

•Agentic AI—AI tools that not only respond to user prompts but can pursue complex goals or undertake open-ended tasks with limited direct supervision and over long timespans.[141] This could involve a lawyer directing an AI agent to review their work and identify flaws in a court submission. It could involve a legal team allowing an AI agent to listen to internal meetings so the AI agent can propose work allocation or next steps in the management of a case.[142]

•Multimodal AI—AI tools that understand and process different kinds of data, or respond to different types of sensory input, including video, audio, text and images.[143] This could involve a statement drafted by a self-represented litigant being presented by an AI-based avatar at a court proceeding.[144]

•Neurotechnology—New technologies that interact directly with the brain and can decode or visually project a person’s thoughts.[145] This could involve a new kind of lie detector that monitors brain waves to detect concealed information.[146]

3.88A great deal of public debate is currently focused on the extent of the reasoning capabilities of major AI models and how far these capabilities can progress from current models.[147] ‘Reasoning’ in this sense is not human sentience, but the capacity of AI to work step by step towards a conclusion when solving problems and to set out the logical steps it has taken. While the addition of ‘reasoning’ capabilities is useful to substantiate an output, emerging research highlights that these processes still do not reflect human logic,[148] and can create new risks of deception in relation to transparency and trust.

3.89Related to the capacity of AI tools to ‘reason’ is the concept of Artificial General Intelligence (AGI), often described as a hypothetical stage when AI models finally surpass human intelligence in many or all domains of knowledge.[149] While AGI may seem the subject of science fiction, some leaders within the field suggest it may be reached well within the next decade.[150] The consequences of developing AI with deep reasoning capabilities are beyond the scope of this report. However, the development of fully autonomous AI systems may require a fundamental reconsideration of regulatory approaches, beyond what has been considered in this report.

3.90Nonetheless, the most significant risk given the pace of technological change is the risk of not acting. This view was put forward by the Federation of Community Legal Centres and Justice Connect:

In our experience, the risks most identified can be easily managed when the right guiding principles and user-centred approaches are adopted. We foresee greater risks in Victorian courts and tribunals not using AI and failing to invest in becoming AI literate, as the use of AI technologies becomes increasingly mainstream as a useful tool for people to understand and navigate the everyday legal problems they face.[151]

Understanding different AI risks

3.91In our consultation paper, we acknowledged distinct opportunities and risks based on:

•the type of AI

•how the AI tool is designed to be used

•whether the AI is used for proper or improper purposes.

3.92Feedback to the Commission emphasised that risks of AI in courts and tribunals are not equal and depend on the following factors:

•Risk based on type of AI—does the AI tool feature GenAI capabilities? Is the AI tool a public tool?

•Risk based on who is using AI—is the user bound by professional obligations?

•Risk based on proximity to the decision stage—what stage or type of matter is the AI tool used or deployed for?

•Risk based on complexity—is the tool applied to consistent, repeatable facts? Does the use require interpretation of law?

Risk based on type of AI

3.93We stated in Chapter 1 that the Commission adopts the OECD’s definition of AI in this report.[152] This definition is broad and incorporates a diverse range of AI tools. As a representative of the Coronial Council stated, defining the type of AI is important:

Where do you define what is and what is not AI? The Grammarly app allows you to improve language. Is it AI or just advanced computation work or a large statistical package? There is a function in MS Word which assists sentence structure. Where do you cross the line and say this is AI?[153]

3.94Different types of AI used in court and tribunal settings carry different types and scales of risk.[154] We heard about specific risks related to:

•Generative AI (GenAI)

•public and closed AI.

What are the risks from Generative AI?

3.95GenAI systems generate content as text, images, music, audio and videos, based on a user’s prompts.[155] New applications of GenAI are observable in agentic AI models and tools.

3.96Machine learning techniques underpin GenAI systems. Machine learning refers to the way algorithms find patterns in data and learn from these patterns for future application. These techniques have become so sophisticated that GenAI systems can generate answers that draw on the memory of previous interactions and prompts.

3.97GenAI systems do not apply human logic. They generate a response by applying trained machine learning models to new and unseen data.[156] This involves sophisticated calculations based on statistical probability, ‘learned’ by analysing a vast store of training data, that predict the next word, phrase or pixel. GenAI models also involve human developers in training the AI model, by reinforcing behaviour towards better and more accurate responses. However, because each response is generated from statistical probability rather than checked against verified facts, every response produced by a GenAI tool carries a risk of hallucination.
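To illustrate the idea of predicting the next word from statistical probability, the following is a deliberately simplified sketch in Python. It assumes a toy ‘bigram’ model that only counts which word follows which in a small, hypothetical corpus. Real GenAI models use neural networks trained on vast data sets and are not built this way; the corpus and the function names are illustrative assumptions only.

```python
from collections import Counter, defaultdict
from math import prod

# A tiny, hypothetical "training corpus". Real GenAI models are trained on
# vastly larger data sets and use neural networks rather than word counts.
corpus = (
    "the court dismissed the appeal . "
    "the court allowed the appeal . "
    "the court dismissed the application . "
    "the tribunal dismissed the claim ."
).split()

# "Training": count how often each word follows each other word (a bigram model).
follow_counts: defaultdict[str, Counter] = defaultdict(Counter)
for current, following in zip(corpus, corpus[1:]):
    follow_counts[current][following] += 1


def next_word_probabilities(word: str) -> dict[str, float]:
    """Estimated probability of each word that followed `word` in the corpus."""
    counts = follow_counts.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def sequence_probability(words: list[str]) -> float:
    """Multiply the probability of each word given the word before it."""
    return prod(
        next_word_probabilities(prev).get(nxt, 0.0)
        for prev, nxt in zip(words, words[1:])
    )


def generate(start: str, length: int) -> list[str]:
    """Repeatedly append the single most probable next word (greedy decoding)."""
    words = [start]
    for _ in range(length):
        probs = next_word_probabilities(words[-1])
        if not probs:  # no known continuation for this word
            break
        words.append(max(probs, key=probs.get))
    return words


# A sentence that never appears in the corpus still receives a high probability.
print(sequence_probability("tribunal dismissed the appeal".split()))  # 0.25
print(" ".join(generate("the", 5)))  # the court dismissed the court dismissed
```

In this sketch, ‘tribunal dismissed the appeal’ is given a probability of 0.25 even though that sentence never appears in the corpus. The model produces fluent, statistically likely text without any check on whether it is true, which is the basic mechanism behind the risk of hallucination.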

3.98The scope of GenAI tools is broad and some ‘specialised’ GenAI applications do not share the same problems as public General Purpose AI like ChatGPT.[157]

3.99AI guidelines issued by courts and tribunals often focus on the risk of GenAI without recognising how risks differ within this broad category of AI tools. Issues with the definition of GenAI are reflected in revisions made by the NSW Supreme Court to its Practice Note.[158] Recent changes to the Practice Note exclude, and do not regulate, kinds of GenAI that:

•correct spelling or grammar

•provide transcriptions or translations

•assist with formatting

•generate chronologies from original source material

•operate as search engines

•operate as dedicated legal research software.[159]

3.100This in part reflects how GenAI capabilities are common in everyday use and that users may not realise they are using AI tools. It may also suggest that, as GenAI capabilities become more commonplace, their perceived risk profile is reduced.

3.101While the revised GenAI definition excludes dedicated ‘legal research software’, we note that research studies demonstrate that ‘the hallucination problem persists at significant levels’ for specialised legal research tools.[160]

What is the difference between public and closed AI?

3.102Even though GenAI underpins many kinds of AI tools, applications and models, different risks arise when comparing the use of public and closed AI tools. We use these terms for this report to distinguish between AI tools, products and services available to the public, compared with those that an organisation (such as a court or law firm) creates or manages.

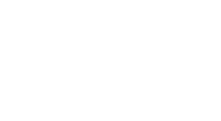

3.103Figure 4 provides real-world examples of public and closed AI. In practice, tools may not always fit precisely within these categories due to complex supply chains and data flows.

3.104Both public and closed AI include three main types of AI:

•General Purpose AI—has general capabilities that can be applied to many tasks

•Specialised AI—has specific capabilities designed for specific tasks such as legal research

•Embedded AI—refers to AI functionalities built into existing software and products.

3.105General Purpose AI tools such as ChatGPT can undertake a wide variety of tasks. International regulation of AI, such as the implementation of the European Union’s Artificial Intelligence Act 2024, has focused on placing regulatory controls on General Purpose AI systems.[161]

3.106Specialised AI tools are developed for a specific, fixed and identifiable purpose or function. They are designed and trained to perform specific tasks or to focus on specialised domains of knowledge, such as contract review. Legal research tools such as Lexis+ AI and Westlaw Precision are examples of closed specialised tools.

3.107Embedded AI refers to AI applications integrated into existing software or technology products. The AI capability within these products may not be obvious to a user, or a user may not be notified AI is being used.[162] This type of AI may be used in everyday applications. Examples include voice-activated assistants like Siri or Alexa, or Google’s AI Overviews, which provide a summary of information at the top of many Google Search results.

Figure 4: Types of AI

Public AI

3.108‘Public AI’ refers to AI tools that are openly accessible to the public, typically via the internet. Public AI tools are trained on broad, often public, datasets most commonly for general purpose use.

3.109The Judicial Council of California recently stated the term ‘public’ would not include ‘any system that the court creates or manages … or any court-operated system the court uses to provide those outside the court with access to court data’.[163] Although it is not always as clearly defined, the term ‘public’ is used to describe AI in many court guidelines in Australia and other jurisdictions.[164]

3.110In very limited instances, this definition of public AI may include AI tools developed on a non-commercial basis and made available to the public by experts, lawyers or public institutions using past reports, judgments or precedent letters.

Closed AI

3.111‘Closed AI’ is defined in contrast to public AI. Closed AI tools are generally not openly accessible to the public, and information used in closed AI tools remains within a controlled environment. When an AI tool is ‘closed’ there are controls to reduce risks related to privacy, or confidentiality settings that protect information from being made publicly available or used to train the AI tool.

3.112The phrase ‘closed AI’ was used by High Court Chief Justice Stephen Gageler in a recent interview,[165] and the phrase ‘closed set’ was used by Justice Needham in a recent speech.[166] In our consultations, we often heard the phrase ‘closed system’.[167] The AI Guidance from the US National Center for State Courts uses the phrase ‘closed AI model’.[168]

3.113We note the Office of the Victorian Information Commissioner used the term ‘enterprise’ GenAI tools, characterising certain AI tools that are ‘approved and managed by organisations and operate in secured environments’.[169] However, this phrase is more commonly used for AI tools used inside an organisation or ‘enterprise’, such as Microsoft 365 Copilot.

3.114The concept of ‘closed AI’ is different from ‘closed source’. The phrase ‘closed source AI’ is used in some court guidelines, including the NSW Supreme Court’s AI Practice Note.[170] In our consultation paper, we highlighted that the difference between open and closed source refers to whether the underlying architecture of the AI tool is freely available.

3.115Features of closed AI tools typically include:

•Some mitigation against privacy risks—closed AI tools may be ‘ringfenced’, meaning that data uploaded to the AI tool can be isolated, the AI tool can be modified so that user prompts are not remembered, or data that is shared is not used to train the underlying model.[171]

•Stronger compliance with legal obligations than public AI—this may occur through contractual agreement, although clear contractual terms are necessary to improve safety.[172]

3.116For instance, the Office of Public Prosecutions referred to its concerns with the use of public AI systems for legal work by staff, which in part led to its pilot of a closed AI tool.[173] The Office of the Victorian Information Commissioner warned that using sensitive or private information in a public AI tool would be a breach of privacy legislation.[174]

3.117In Figure 4, we distinguish between two versions of Copilot. Bing Copilot operates as a public General Purpose AI tool and shares all the risks of other public AI tools. In contrast, Microsoft 365 Copilot is a secure version of Copilot. This means it can be ringfenced and it can forget user prompts, but use of this tool requires a subscription.

3.118Hallucinations will still occur on closed AI tools, although the likelihood of inaccuracy may be reduced where responses draw on more targeted data, such as through Retrieval Augmented Generation, which supplies the AI model with relevant source documents when a query is made.[175]
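Retrieval Augmented Generation can be sketched as two steps: first retrieve relevant passages from a controlled document set, then give those passages to the AI model together with the user’s question. The Python sketch below is a minimal illustration under stated assumptions. The documents, stopword list and function names are hypothetical. Retrieval uses simple word overlap, whereas production systems commonly use vector embeddings. No AI model is actually called; the resulting prompt would be passed to whichever closed AI tool is in use.

```python
import re

# Hypothetical document collection held within a closed environment.
DOCUMENTS = {
    "registry-hours": "The registry is open from 9am to 4pm on business days.",
    "filing-fees": "A filing fee applies when lodging an application, unless a fee waiver is granted.",
    "interpreters": "Interpreters can be booked through the registry before the hearing.",
}

# Common words ignored when matching (an illustrative, incomplete list).
STOPWORDS = {"a", "an", "the", "is", "are", "when", "what", "how", "to", "of", "on", "from", "be", "can"}


def terms(text: str) -> set[str]:
    """Lower-case words in `text`, excluding stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[str]:
    """Ids of up to k documents sharing the most terms with the query (ties keep input order)."""
    query_terms = terms(query)
    scores = {doc_id: len(query_terms & terms(text)) for doc_id, text in documents.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return [doc_id for doc_id in ranked[:k] if scores[doc_id] > 0]


def build_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    """Combine the retrieved passages and the question into a single prompt."""
    sources = "\n".join(f"[{d}] {documents[d]}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {query}"
    )


print(build_prompt("When is the registry open?", DOCUMENTS, k=1))
```

Grounding the prompt in retrieved sources can make outputs more targeted, but the model may still misread or go beyond those sources. This is consistent with the observation that hallucinations still occur on closed AI tools.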

3.119Potential users of closed AI may include members of the public, not just those employed by a court or legal practice. Justice Connect’s Smart Assist AI is an example of a closed AI tool available to the public.[176]

3.120A further consideration for the use of closed AI systems relates to data residency, which refers to ‘the physical or geographical location of an organization’s data’.[177] The information entered into a public AI tool is likely to be stored outside Victoria, and in many cases, in jurisdictions that do not have information privacy and information security laws equivalent to the PDP Act. This may contravene Information Privacy Principle 9, relating to transborder data flows.[178]

3.121Closed AI systems are an important consideration for court uses of AI moving forward.[179] As stated by a representative of the Federal Circuit and Family Court:

Most tools currently sit on open-source platforms, which poses privacy risks … [a] priority is to explore whether and how AI can be piloted within an in house closed model which ensures that highly sensitive information is retained within a secure walled garden.[180]

3.122The cost of implementing AI is important to take into consideration. Funding is required to develop, procure and maintain AI tools and costs will vary significantly depending on the type and scope of the tool.[181] For the deployment of an AI tool to remain safe, closed AI systems will incur costs along an AI tool’s lifecycle, from design to deployment, from training to monitoring.[182]

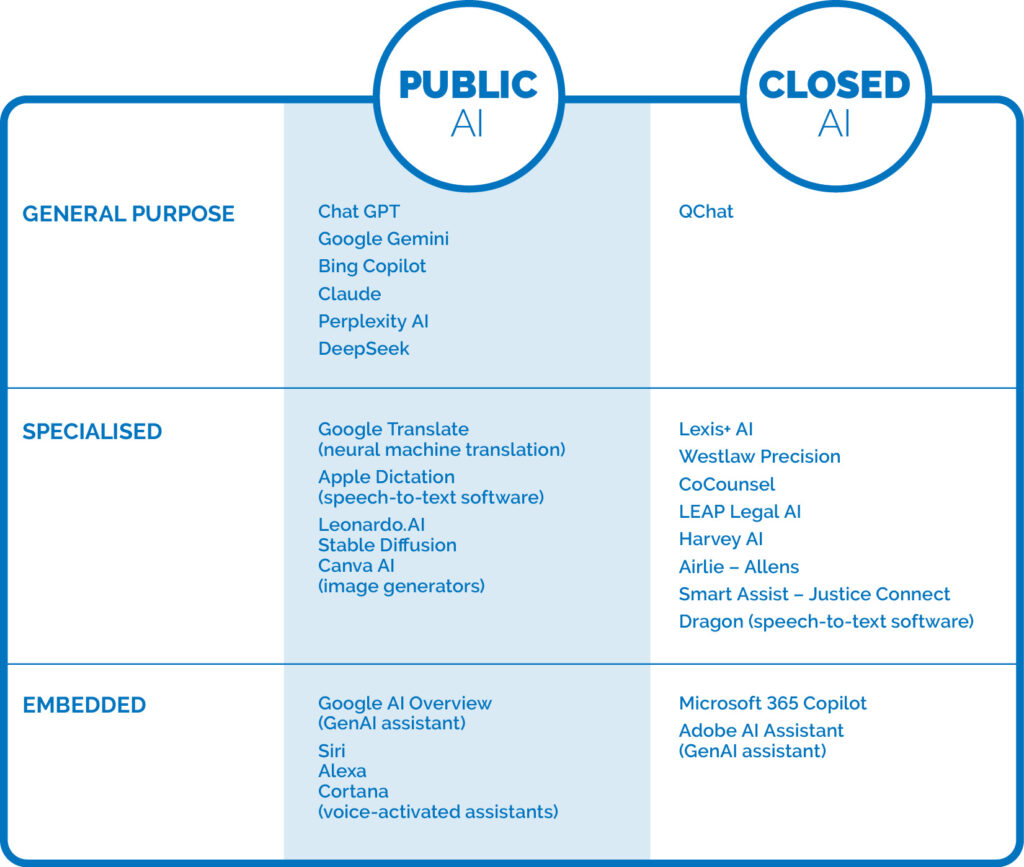

Differences across types of closed AI

3.123The benefits and limitations of the different types of AI are illustrated in Figure 5. In this section we focus on three types of closed AI tools:

•procured tools

•developed tools

•hybrid tools.

3.124Understanding risks associated with different types of closed AI can assist Victoria’s courts and tribunals in assessing and mitigating risk.[183] The three identified types of closed AI can often be differentiated by three factors:

•accessibility—whether access is provided to subscribers, authorised users or a target audience

•ownership—whether an AI tool is owned by a third party or by the authorising organisation itself

•customisation—the extent to which an AI tool can be tailored through fine-tuning or systems integration.

Figure 5: Pros and Cons of types of AI

3.125Procured (or off-the-shelf) tools refer to AI products licensed from a third party. These generally cannot be customised. Access to off-the-shelf solutions is usually subscriber-based. Many are hosted on cloud services and do not require internal technical support for the AI tool to properly function.[184]

3.126Developed (or custom-built) tools are built ‘in-house’, meaning built within the organisation wishing to use them. They do not formally involve a third party and are tailored to the organisation’s goals, requirements and data systems.[185] The key benefit is that controls and testing are potentially in place to protect the owner. While developed tools can reduce some risks, they often require internal technical expertise to maintain and can be costly to develop.[186]

3.127Hybrid tools utilise pre-built models but can be tailored in some ways.[187] Often these tools are developed in partnership with a third party. Implementation of a hybrid AI tool does not usually require technical expertise internal to the organisation. Hybrid tools might enable organisations to specify compliance requirements through agreement, such as where data is stored.

3.128For both procured and hybrid tools, contractual obligations should be carefully considered.[188] The Public Record Office Victoria noted that:

If there are decisions being undertaken by third party suppliers … it requires strong contractual arrangements to make sure data and information records are created and managed appropriately.[189]

3.129There are risks that contractual assurances made by commercial vendors are inaccurate or difficult to monitor, particularly around legal compliance with privacy laws.[190] We heard from some stakeholders that it may be effectively impossible in many instances to demonstrate or test data flows, including privacy requirements for personal data to remain in a certain jurisdiction. Representatives of the Office of the Victorian Information Commissioner highlighted:

In one instance, a Victorian Government department sought to get the Commonwealth e-Safety Commissioner to remove data used by a US company (OpenAI). Open AI declined to regress the model, which would be a very costly exercise for any company. These kinds of technical problems are almost impossible to remediate.[191]

3.130The significance of maintaining ‘data sovereignty’ by keeping data in Australia was considered by Court Services Victoria.[192] We heard from other stakeholders that developers need to be transparent about how AI systems are built.[193]

3.131Victoria’s courts and tribunals should consistently assess whether privacy and security by design should be adopted when exploring AI solutions. ‘By design’ means embedding data privacy and information security considerations throughout the lifecycle of any specific AI tool. This could include establishing internal privacy policies, conducting privacy impact assessments and considering whether appropriate data systems are in operation before an AI solution is considered.[194] It could also mean setting limits on the kinds of data collected and on how that data is used and destroyed.[195]

3.132In terms of security by design, this would mean establishing how organisational processes mitigate the risk of cybersecurity breaches.[196] We refer to the need to consider privacy and security by design approaches in our recommended AI assurance framework for Victoria’s courts and VCAT in Chapter 9.

Risks based on who is using AI

3.133We heard that there may be different risk levels depending on who is using AI within courts and tribunals, such as:

•judicial officers

•court and tribunal staff

•lawyers

•experts and witnesses

•represented and self-represented litigants.

Professional obligations

3.134Different court users are bound by different legal and professional obligations. Lawyers are required to comply with professional obligations. These obligations may assist in mitigating some risks of AI use. But there were different views about whether existing professional obligations are sufficient to shape behaviours and compel compliance by lawyers and expert witnesses.[197] This is discussed further in Chapter 5.

Self-represented litigants

3.135While there are specific benefits identified for self-represented litigants using AI, there are also additional risks. Self-represented litigants who are not lawyers are not subject to professional obligations and may be less equipped to check the accuracy of AI outputs.[198]

3.136We heard that self-represented litigants needed communication and training about potential risks of AI use.[199] The Castan Centre stated:

For self-represented litigants the use of generative AI technologies might represent an opportunity to ‘level the playing field’ by deploying such technologies in the drafting of documents, the writing of submissions, and the like. Courts have already moved to regulate the use of such technologies. But there are recognised problems with legal AI models generating inaccurate information or hallucinating content, which may create further burdens in workload for courts.[200]

Risks based on proximity to decision

3.137The Judicial College of Victoria told us that risk increases the closer the use of an AI tool comes to judicial decision-making.[201] A similar perspective focused on when AI was used in relation to a court hearing. It was suggested that risk could be classified as increasing the closer the use was to the hearing stage. That is, risks in preparing for a hearing could be seen as different to the risk associated with use after a hearing has occurred.[202] We also heard that risk could be influenced by whether there are opportunities for human review of an AI’s output. The Victorian Bar suggested the greatest benefit for AI was generally at pre-trial and preparatory stages.[203]

Risks based on decision complexity

3.138Risk also differed depending on whether AI was being used for complex or straightforward issues. Courts and others identified benefits for the use of AI in relation to high-volume, low-risk decisions,[204] particularly for cases where there is limited judicial discretion.[205]

3.139We also heard that each jurisdiction determines cases with different levels of complexity. As the Coroners Court stated:

In considering the risks and benefits of using AI in Victoria’s courts and tribunals, this must be put in the context of the functions and purposes of the relevant court or tribunal. The opportunities and risks will differ between jurisdictions, and any framework or regulatory mechanism must take into account these jurisdictional differences.[206]

Other high-level risks

3.140We asked what might constitute high-level or high-impact risks in courts or tribunals. The most commonly identified high-level risk was the use of AI for judicial decision-making, with many stating this should be prohibited.[207] Other high-level risks included where the use of AI involves:

•a deprivation of liberty[208]

•sensitive information or information dealing with marginalised people[209]

•administrative decisions[210]

•biometrics such as live facial recognition technology[211]

•AI tools, models and systems that are not transparent (such as the black box problem), in circumstances where accuracy is required[212]

•reoffending risk prediction tools[213]

•provision of legal advice.[214]

3.141We note some existing laws already recognise the use of reoffending prediction tools as high-risk. For instance, the Children, Youth and Families Act 2005 (Vic) does not permit the admission of evidence that uses a score, assessment or rating related to a child’s risk of reoffending.[215]

3.142Some considered that any court use of GenAI may be considered high-risk where there is limited human oversight.[216] Others stated any GenAI may become a high-level risk if improperly used, for example, inputting personal or sensitive information into ChatGPT.[217] The Human Rights Law Centre called for all AI systems potentially used by Victoria’s courts and tribunals that produce discriminatory outcomes or contain systemic biases to be prohibited.[218]

Responding to different AI risks

3.143Our recommendations are informed by opportunities and risks described in this chapter, and recognise that risks differ across:

•the type of AI used

•who is using AI

•how AI is used.

3.144In this report, we make recommendations in relation to:

•principles for AI use in courts and tribunals (Chapter 6)

•guidelines to advise court users, judicial officers and court staff about risks and safe use of AI in Victoria’s courts and VCAT (Chapters 7 and 8)

•governance bodies, policies and an AI assurance framework to guide decisions about AI use in Victoria’s courts and VCAT (Chapter 9).

3.145In those chapters we propose that courts clearly define:

•GenAI

•Public and closed AI.

3.146We also consider different scales of risk depending on the court user and whether they have professional obligations to courts and tribunals.

-

Submissions 6 (Victorian Legal Services Board and Commissioner), 7 (Dr Natalia Antolak-Saper), 12 (Victoria Legal Aid), 14 (Centre for Artificial Intelligence and Digital Ethics, The University of Melbourne), 16 (Law Institute Victoria), 19 (Juries Commissioner), 23 (Victorian Bar Association), 24 (County Court of Victoria), 26 (Supreme Court of Victoria), 27 (Federation of Community Legal Centres and Justice Connect). Consultations 5 (Victorian Bar Association), 15 (Magistrates’ Court of Victoria), 24 (Victorian Advocacy League for Individuals with Disability), 30 (Eastern Community Legal Centre).

-

Submissions 4 (Coroners Court of Victoria), 8 (Damian Curran), 12 (Victoria Legal Aid), 16 (Law Institute Victoria), 19 (Juries Commissioner), 23 (Victorian Bar Association), 24 (County Court of Victoria), 25 (Court Services Victoria), 26 (Supreme Court of Victoria), 27 (Federation of Community Legal Centres and Justice Connect). Consultations 4 (Victorian Legal Services Board and Commissioner), 6 (Office of Public Prosecutions), 25 (Microsoft).

-

Submission 4 (Coroners Court of Victoria).

-

Submissions 6 (Victorian Legal Services Board and Commissioner), 8 (Damian Curran). Consultations 26 (inTouch Multicultural Centre Against Family Violence), 35 (Victoria Legal Aid).

-

See also Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 7–9, 11 <https://docs.un.org/en/A/80/169>.

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Submission 19 (Juries Commissioner).

-

Submissions 7 (Dr Natalia Antolak-Saper), 24 (County Court of Victoria). Consultation 13 (Federal Circuit and Family Court of Australia).

-

Nigel J Balmer et al, Everyday Problems and Legal Need (The Public Understanding of Law Survey (PULS) Vol.1, Victorian Law Foundation, 2023) 10 <https://puls.victorialawfoundation.org.au/publications/everyday-problems-and-legal-need>.

-

Submissions 6 (Victorian Legal Services Board and Commissioner), 14 (Centre for Artificial Intelligence and Digital Ethics, The University of Melbourne).

-

Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 11–12 <https://docs.un.org/en/A/80/169>.

-

Submissions 4 (Coroners Court of Victoria), 26 (Supreme Court of Victoria), 27 (Federation of Community Legal Centres and Justice Connect). See also Conference of State Court Administrators (COSCA), Generative AI & the Future of the Courts: Responsibilities and Possibilities (Policy Paper, National Center for State Courts, August 2024) <https://www.ncsc.org/resources-courts/generative-ai-future-courts>.

-

Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 19 <https://docs.un.org/en/A/80/169>.

-

Submission 4 (Coroners Court of Victoria). Also Submission 25 (Court Services Victoria). The role of AI in reducing staff access to traumatic materials was also referred to by Consultation 6 (Office of Public Prosecutions).

-

Submission 19 (Juries Commissioner).

-

Sophie Farthing et al, Human Rights and Technology (Final Report, Australian Human Rights Commission, 2021) 92 <https://humanrights.gov.au/our-work/technology-and-human-rights/projects/final-report-human-rights-and-technology>.

-

Submission 10 (Castan Centre for Human Rights Law, Monash University).

-

Productivity Commission, Interim Report – Harnessing Data and Digital Technology (Report, August 2025) 105 <https://www.pc.gov.au/inquiries/current/data-digital/interim>.

-

Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 12 <https://docs.un.org/en/A/80/169>, which suggested AI may be used to support the right to assistance from a lawyer working in legal aid or a community legal centre by reducing the time those lawyers spend on administrative matters.

-

Submission 17 (Office of Public Prosecutions).

-

Consultation 4 (Victorian Legal Services Board and Commissioner).

-

Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 19 <https://docs.un.org/en/A/80/169>.

-

Ministry of Justice (UK), AI Action Plan for Justice (Policy Paper, 31 July 2025) <https://www.gov.uk/government/publications/ai-action-plan-for-justice/ai-action-plan-for-justice>.

-

HM Courts & Tribunals Service, ‘Modernising Courts and Tribunals: Benefits of Digital Services’, GOV.UK (Web Page, 24 March 2025) <https://www.gov.uk/guidance/modernising-courts-and-tribunals-benefits-of-digital-services>.

-

Supreme Court Singapore, A Future-Ready Judiciary: Supreme Court Annual Report 2017 (Report, 2017) 7 <https://www.judiciary.gov.sg/docs/default-source/publication-docs/supreme_court_annual_report_2017.pdf?sfvrsn=bf9a0805_4>; Justice Chua explored potential AI uses in the pre-hearing and post-hearing phases based on the Technology Blueprint developed by the Taskforce: Justice Chua Lee Ming, ‘Technology in the Singapore Courts’ (Speech, 2nd China-ASEAN Justice Forum, Singapore, 8 June 2017) 5–6.

-

Office of the Chief Justice of New Zealand, Digital Strategy for Courts and Tribunals (Report, March 2023) 32 <https://www.courtsofnz.govt.nz/assets/7-Publications/2-Reports/20230329-Digital-Strategy-Report.pdf>.

-

Submissions 16 (Law Institute Victoria), 27 (Federation of Community Legal Centres and Justice Connect). See also Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 10 <https://docs.un.org/en/A/80/169>.

-

Submission 26 (Supreme Court of Victoria).

-

Submission 5 (Office of the Victorian Information Commissioner).

-

Submissions 5 (Office of the Victorian Information Commissioner), 6 (Victorian Legal Services Board and Commissioner).

-

Submission 5 (Office of the Victorian Information Commissioner). Consultations 20 (AI for Law Enforcement and Community Safety Lab), 30 (Eastern Community Legal Centre).

-

Canadian Judicial Council, Guidelines for the Use of Artificial Intelligence in Canadian Courts (Guidelines, September 2024) 8 <https://cjc-ccm.ca/sites/default/files/documents/2024/AI%20Guidelines%20-%20FINAL%20-%202024-09%20-%20EN.pdf>.

-

Submission 26 (Supreme Court of Victoria).

-

Submission 15 (Human Rights Law Centre).

-

Submission 10 (Castan Centre for Human Rights Law, Monash University).

-

Submissions 5 (Office of the Victorian Information Commissioner), 20 (Deakin Law Clinic).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Office of the Victorian Information Commissioner (OVIC), Investigation into the Use of ChatGPT by a Child Protection Worker (Report, September 2024) <https://ovic.vic.gov.au/regulatory-action/investigation-into-the-use-of-chatgpt-by-a-child-protection-worker/>.

-

Ibid.

-

Andreas Nautsch et al, ‘Preserving Privacy in Speaker and Speech Characterisation’ (2019) 58 Computer Speech & Language 441, 448 <https://www.sciencedirect.com/science/article/pii/S0885230818303875>.

-

Consultation 6 (Office of Public Prosecutions).

-

Submission 24 (County Court of Victoria). Consultation 28 (Monash University Digital Law Group).

-

Consultations 14 (Office of the Victorian Information Commissioner), 21 (Public Record Office Victoria).

-

Submissions 10 (Castan Centre for Human Rights Law, Monash University), 12 (Victoria Legal Aid). Consultations 14 (Office of the Victorian Information Commissioner), 27 (UNSW’s Centre for the Future of the Legal Profession and Professor Lyria Bennett Moses).

-

Consultation 29 (Cenitex).

-

Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 12–13 <https://docs.un.org/en/A/80/169>.

-

Wainohu v New South Wales [2011] HCA 24; (2011) 243 CLR 181, [54].

-

Shahriar Akter et al, ‘Algorithmic Bias in Data-Driven Innovation in the Age of AI’ (2021) 60 International Journal of Information Management 102387, 1–2 <https://linkinghub.elsevier.com/retrieve/pii/S0268401221000803>; Sophie Farthing et al, Human Rights and Technology (Final Report, Australian Human Rights Commission, 2021) 106–7 <https://humanrights.gov.au/our-work/technology-and-human-rights/projects/final-report-human-rights-and-technology>; Paul W Grimm, Maura R Grossman and Gordon V Cormack, ‘Artificial Intelligence as Evidence’ (2021) 19(1) Northwestern Journal of Technology and Intellectual Property 9, 42.

-

Centre for Data Ethics and Innovation (CDEI), Review into Bias in Algorithmic Decision-Making (Report, November 2020) 26, 28, 100 <https://assets.publishing.service.gov.uk/media/60142096d3bf7f70ba377b20/Review_into_bias_in_algorithmic_decision-making.pdf>.

-

Submissions 15 (Human Rights Law Centre), 18 (Northern Community Legal Centre).

-

Submissions 12 (Victoria Legal Aid), 15 (Human Rights Law Centre). Consultation 8 (Federation of Community Legal Centres Workshop).

-

Submission 15 (Human Rights Law Centre).

-

Submission 18 (Northern Community Legal Centre).

-

Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 9, 16–17 <https://docs.un.org/en/A/80/169>.

-

Submission 14 (Centre for Artificial Intelligence and Digital Ethics, The University of Melbourne); Ivan Belcic and Cole Stryker, ‘RAG vs. Fine-Tuning’, IBM Think (Web Page, 14 August 2024) <https://www.ibm.com/think/topics/rag-vs-fine-tuning>. For a comparison of the impact of fine-tuning vs Retrieval Augmented Generation techniques on accuracy see Oded Ovadia et al, ‘Fine-Tuning or Retrieval? Comparing Knowledge Injection in LLMs’ (2024) arXiv:2312.05934v3 [cs.AI] <http://arxiv.org/abs/2312.05934>.

-

Michael Legg, Vicki McNamara and Armin Alimardani, ‘The Promise and the Peril of the Use of Generative Artificial Intelligence in Litigation’ (2025) 48(4) University of New South Wales Law Journal (forthcoming) 5–6 <https://www.austlii.edu.au/cgi-bin/viewdoc/au/journals/UNSWLRS/2025/23.html>.

-

Varun Magesh et al, ‘Hallucination-Free? Assessing the Reliability of Leading AI Legal Research Tools’ (2025) 22(2) Journal of Empirical Legal Studies 216, 216, 225 <https://onlinelibrary.wiley.com/doi/10.1111/jels.12413>.

-

See Dayal [2024] FedCFamC2F 1166; Murray on behalf of the Wamba Wemba Native Title Claim Group v State of Victoria [2025] FCA 731; Bottrill v Graham & Anor (No 2) [2025] NSWDC 221; Nikolic & Anor v Nationwide News Pty Ltd & Anor [2025] VSCA 112; Wang v Moutidis [2025] VCC 1156; Kaur v RMIT [2024] VSCA 264; Luck v Secretary, Services Australia [2025] FCAFC 26; Valu v Minister for Immigration and Multicultural Affairs (No 2) [2025] FedCFamC2G 95; Handa & Mallick [2024] FedCFamC2F 957; JNE24 v Minister for Immigration and Citizenship [2025] FedCFamC2G 1314; JML Rose Pty Ltd v Jorgensen (No 3) [2025] FCA 976; Finch v Heat Group Pty Ltd [2024] FedCFamC2G 161; May v Costaras [2025] NSWCA 178; Weedbrook v Partlin [2024] QDC 194; Ivins v KMA Consulting Engineers Pty Ltd & Ors [2025] QIRC 141; QWYN and Commissioner of Taxation [2025] ARTA 83; LJY v Occupational Therapy Board of Australia [2025] QCAT 96; Lakaev v McConkey [2024] TASSC 35; Director of Public Prosecutions (ACT) v Khan [2024] ACTSC 19.

-

Chief Justice Bell, ‘Change at the Bar and the Great Challenge of Gen AI’ (Speech, Address to the Australian Bar Association, Sydney, 29 August 2025) 22–29 <https://inbrief.nswbar.asn.au/posts/13dbc1d59f076b32283b003eb800f0de/attachment/BellCJ-ABA-20250829.pdf>.

-

Director of Public Prosecutions v GR [2025] VSC 490, [72]–[80].

-

Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 9 <https://docs.un.org/en/A/80/169>; Tambiama Madiega, General-Purpose Artificial Intelligence (PE 745.708, European Parliamentary Research Service, March 2023) 2; Jose Hernandez-Orallo, ‘Caveats and Solutions for Characterising General-Purpose AI’ in ECAI 2024 (IOS Press, 2024) 2, 3; Jan Kocoń et al, ‘ChatGPT: Jack of All Trades, Master of None’ (2023) 99 Information Fusion 101861, 2 <https://www.sciencedirect.com/science/article/pii/S156625352300177X>.

-

Donald R Polaski and Marissa J Brienza, ‘Managing AI: Risks and Opportunities’ (2023) 12(7) PM World Journal 6–7 <https://pmworldjournal.com/article/managing-ai-risks-and-opportunities>.

-

Anthony M Barrett et al, AI Risk-Management Standards Profile for General-Purpose AI Systems (GPAIS) and Foundation Models (Report, Center for Long-Term Cybersecurity, UC Berkeley, November 2023) 7, 12 <https://cltc.berkeley.edu/wp-content/uploads/2023/11/Berkeley-GPAIS-Foundation-Model-Risk-Management-Standards-Profile-v1.0.pdf>.

-

Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 9 <https://docs.un.org/en/A/80/169>; Xinyue Shen et al, ‘In ChatGPT We Trust? Measuring and Characterizing the Reliability of ChatGPT’ (2023) arXiv:2304.08979v2 [cs.CR]:1-21, 12 <http://arxiv.org/abs/2304.08979>.

-

Varun Magesh et al, ‘Hallucination-Free? Assessing the Reliability of Leading AI Legal Research Tools’ (2025) 22(2) Journal of Empirical Legal Studies 216 <https://onlinelibrary.wiley.com/doi/10.1111/jels.12413>.

-

Jan Kocoń et al, ‘ChatGPT: Jack of All Trades, Master of None’ (2023) 99 Information Fusion 101861, 12–15 <https://www.sciencedirect.com/science/article/pii/S156625352300177X>; Xinyue Shen et al, ‘In ChatGPT We Trust? Measuring and Characterizing the Reliability of ChatGPT’ (2023) arXiv:2304.08979v2 [cs.CR]:1-21, 2, 7 <http://arxiv.org/abs/2304.08979>.

-

Armin Alimardani, ‘Generative Artificial Intelligence vs. Law Students: An Empirical Study on Criminal Law Exam Performance’ (2024) 16(2) Law, Innovation and Technology 777, 808 <https://doi.org/10.1080/17579961.2024.2392932>.

-

Ibid 811–813.

-

Consultation 15 (Magistrates’ Court of Victoria).

-

Submission 11 (Dr Armin Alimardani).

-

Alexander Meinke et al, ‘Frontier Models Are Capable of In-Context Scheming’ (2025) arXiv:2412.04984v2 [cs.AI]:1-72, 7–9 <http://arxiv.org/abs/2412.04984>.

-

‘Detecting Misbehavior in Frontier Reasoning Models’, OpenAI (Web Page, 10 March 2025) <https://openai.com/index/chain-of-thought-monitoring/>.

-

National Institute of Standards and Technology (NIST), Artificial Intelligence Risk Management Framework (AI RMF 1.0) (NIST AI 100-1, U.S. Department of Commerce, January 2023) 13 <http://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.100-1.pdf>.

-

Consultation 23 (Dr Fabian Horton). See also Donald R Polaski and Marissa J Brienza, ‘Managing AI: Risks and Opportunities’ (2023) 12(7) PM World Journal 6–7 <https://pmworldjournal.com/article/managing-ai-risks-and-opportunities>.

-

Consultation 20 (AI for Law Enforcement and Community Safety Lab).

-

Consultation 5 (Victorian Bar Association).

-

Submission 10 (Castan Centre for Human Rights Law, Monash University). Consultations 14 (Office of the Victorian Information Commissioner), 20 (AI for Law Enforcement and Community Safety Lab), 21 (Public Record Office Victoria).

-

Submissions 15 (Human Rights Law Centre), 20 (Deakin Law Clinic). Consultation 20 (AI for Law Enforcement and Community Safety Lab).

-

Consultation 14 (Office of the Victorian Information Commissioner). See also Consultation 23 (Dr Fabian Horton), who emphasised that organisational supports for AI systems cannot be ‘set and forget’, for instance those needed to compensate for the risk of ‘model drift’.

-