6. Principles to guide the safe use of AI in courts and tribunals

Overview

•There is broad support for principles to be used to guide the safe use of AI in Victoria’s courts and VCAT.

•We propose principles to guide safe use of AI in Victoria’s courts and VCAT, drawing on principles relating to AI, justice and human rights.

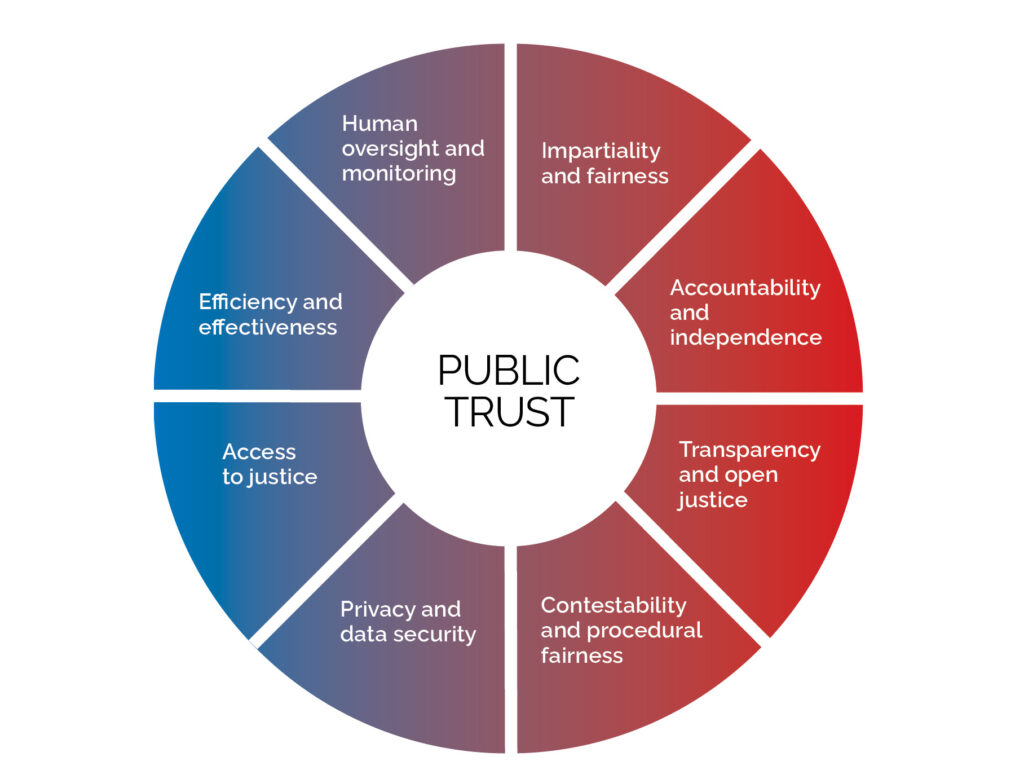

•Principles for Victoria’s courts and VCAT should include:

–impartiality and fairness

–accountability and independence

–transparency and open justice

–contestability and procedural fairness

–privacy and data security

–access to justice

–efficiency and effectiveness

–human oversight and monitoring.

Developing AI principles for courts and VCAT

6.1Our terms of reference ask us to develop principles to guide the safe use of AI in Victoria’s courts and tribunals.

6.2We put forward eight draft principles for regulating AI in our consultation paper. The draft principles were drawn from:

•common AI regulatory principles based on Australian and international sources[1]

•core principles of justice relevant to courts and tribunals based on the judicial values outlined in AI Decision Making and the Courts.[2]

6.3International courts and tribunals have also developed a range of AI guidelines and policies that incorporate principles specific to the justice system. While each set of principles is unique, there are some common elements in the principles adopted across various courts and tribunals. Appendix D contains examples of principles that have been used to regulate AI in society broadly and in courts and tribunals.

Stakeholder views on the draft principles

6.4The principles proposed in our consultation paper enjoyed broad support amongst stakeholders:

•no-one expressed a view against the adoption of the proposed principles

•many stakeholders supported the principles with no change[3]

•some others agreed that the principles were a good starting point but suggested additions or clarifications.[4]

6.5We consistently heard that the flexibility of principles is helpful for responding to the rapid pace of change in AI’s evolution. The Victorian Bar Association considered principles would be useful as a starting point for developing guidelines, practice notes and rules.[5]

6.6We heard that the principles are high level enough to assist in shaping the thinking of courts and tribunals.[6] While nuanced, they are not overly restrictive.[7]

6.7This approach contrasts with a legislation-based approach, which may take time to amend and would inevitably lag behind technology, as discussed in Chapter 5. Legislation can also become obsolete where technologies and the policy environment are quickly changing.[8] While a principle-based approach to AI in courts and tribunals received broad support, there was very little support for an AI-specific legislative approach for Victoria’s courts and VCAT.[9]

6.8Some stakeholders supported the principles but suggested modifications to promote reliable governance practices and inform assessment of AI tools.[10] Suggestions for change centred on:

•inserting a human rights lens and prioritising public interest[11]

•accounting for the environmental cost of AI use[12]

•incorporating an Indigenous Data Sovereignty perspective[13]

•broadening the concept of efficiency beyond cost savings, to encompass improved outcomes and access to justice for court users.[14]

6.9We also heard public trust was an important anchoring concept for the principles. Some considered it an additional principle to capture issues that do not fit easily with the other principles.[15]

6.10Others viewed public trust as an overarching concept that was the product of the principles.[16] We have adopted that view in this chapter.

6.11Public trust is fundamental to maintaining confidence that courts and tribunals are operating fairly. Where feasible, Victoria’s courts and VCAT should incorporate the principles when engaging with AI tools. This will provide a foundation for maintaining public trust and confidence in courts and VCAT, which is fundamental to the rule of law.

6.12We heard from stakeholders that the combined principles were important to maintain the rule of law. The use of AI in Victoria’s courts and VCAT, including by judicial officers, must be consistent with ‘upholding the rule of law’ and the rights and freedoms of individuals.[17] Contestability and procedural fairness were identified as crucial mechanisms to maintain the rule of law.[18]

6.13While there was strong support for the principles, we also heard that to be useful, principles must be implementable. Some terms used are complex and have different technical and legal meanings.[19] Others are so broad that, without further guidance, they may be meaningless.

6.14The Supreme Court highlighted that each principle may apply differently to:

•functions relating to the administration of courts

•administrative functions that are connected or adjacent to judicial functions

•judicial functions.[20]

6.15Principles alone may not be effective in influencing or changing people’s behaviour.[21] We heard that principles need to be enlivened with practical examples.[22] While this chapter sets out overarching principles, we discuss in later chapters how to give effect to principles in practical guidelines and governance processes.

Principles to guide AI use in courts and tribunals

6.16The Commission recommends the principles outlined in Figure 6 to guide the use of AI in courts and tribunals.

6.17We discuss how each principle has been updated since our consultation paper was released to reflect feedback, and the rationale for each update.

Figure 6. Principles to guide AI use in Victoria’s courts and tribunals

Principle 1: Impartiality and fairness

The use of AI should not undermine applicable human rights, including the right to equality before the law and the right to a fair hearing before an impartial decision maker. Reasonable steps should be taken throughout the lifecycle of AI systems to ensure they do not create, reproduce or exacerbate bias or discrimination and that they support procedural fairness.

6.18Following our consultation paper, stakeholders identified opportunities to clarify the principle of impartiality and fairness. The Centre for the Future of the Legal Profession and UNSW Law and Justice raised concerns that, ‘While most people would agree that any AI tools should be “fair” the precise content of fairness is challenging to define.’[23]

6.19One way to address this uncertainty is to clearly align this principle with existing human rights.[24] We heard from stakeholders that the regulation of AI in Victoria’s courts and VCAT could be supported by establishing clear links to human rights.[25] Human rights are featured in several existing AI ethical frameworks.[26] The Australia New Zealand Responsible and Ethical Artificial Intelligence Framework states that, ‘Police organisations should design and/or use AI systems in a way that respects equality, fairness and human rights.’[27]

6.20Principle 1 has been updated to capture the importance of ensuring the use of AI does not infringe the right to a fair hearing and to equality before the law.

6.21The right to a fair hearing is protected by the Victorian Charter of Human Rights and Responsibilities Act 2006 (Vic) (the Charter).[28] Section 24 of the Charter establishes that:

A person charged with a criminal offence or a party to a civil proceeding has the right to have the charge or proceeding decided by a competent, independent and impartial court or tribunal after a fair and public hearing.[29]

6.22The Charter also recognises an individual’s right to equality before the law. Section 8 of the Charter states:

Every person is equal before the law and is entitled to the equal protection of the law without discrimination and has the right to equal and effective protection against discrimination.[30]

6.23Victoria’s courts and VCAT have obligations under the Charter to consider and act compatibly with these rights. The rights to equality before the law and to a fair hearing are also protected in international law, such as by Article 14 of the International Covenant on Civil and Political Rights.[31]

6.24In line with recommendations from the Australian Human Rights Commission, the proposed principle is intended to complement existing laws and rights.[32] It is not an alternative to existing rights to a fair hearing and equality before the law.

6.25Several stakeholders were cautious that the use of AI in Victoria’s courts and VCAT could result in bias and discrimination, which may infringe the right to a fair hearing. Representatives of the Coronial Council noted:

What sits behind the technology and its potential to perpetuate marginalisation and to reflect cultural bias is a concern. It is something we need to be incredibly careful about—ensuring there is not a perpetuation of bias.[33]

6.26As discussed in Chapter 3, the use of AI in courts and tribunals can create bias that can lead to direct or indirect discrimination. The Northern Community Legal Centre was concerned that existing data held by courts is likely to reflect a history of legal and social inequality.[34] It warned that using this data for automated decision making could ‘replicate discriminatory practices rather than moving towards a more equal society’.[35]

6.27AI tools can reproduce, reinforce and exacerbate bias, such as racial inequalities.[36]

6.28Even where discrimination is not intended, it has been documented that AI systems can lead to indirect discrimination because:

seemingly neutral data points that indirectly correlate with protected characteristics can lead to discriminatory outcomes. For example, the use of proxies like postal codes or spending habits, which may seem neutral but indirectly reflect characteristics such as ethnicity or socio-economic status, may result in biased decisions.[37]

6.29Monash University’s Castan Centre highlighted that courts and judicial officers should consider access to justice and equality obligations under the Charter when implementing AI for court users, especially for self-represented litigants. Judges have an obligation to ensure self-represented parties, particularly those with a protected attribute such as a disability, can participate in a fair hearing. The Castan Centre said this includes being aware of the ‘risks of automation bias … for court users with protected attributes in respect of whom particular obligations around equality and non-discrimination arise’.[38]

6.30The Australian Human Rights Commission raised similar concerns about the risks of automation bias ‘for individuals from a consumer to a government level’.[39] It stated that ‘Algorithmic decision-making may also impede on independent decision making due to a tendency to over rely on the outcomes produced by AI.’[40]

6.31It may be difficult to detect bias due to challenges explaining or understanding how an AI system operates (the ‘black box’ phenomenon), coupled with a lack of transparency. If judges do not, or cannot, know how an AI system functions, this can impact their judicial role, and their duty to be impartial and unbiased.[41]

6.32To address these risks, stakeholders recommended that when procuring, developing, implementing, or using AI systems, Victoria’s courts and VCAT should:

•understand the risk of bias and aim to ensure any data used to train AI systems is ethically sourced, relevant and free from discriminatory patterns that could lead to unfair or unjust outcomes[42]

•consider jurisdictional relevance and ask whether data is relevant to the Victorian context[43]

•co-design and develop automated decision-making systems with court users from marginalised backgrounds and the services who work with them[44]

•where relevant, trial AI tools with people with strong to moderate intellectual disabilities[45]

•take reasonable steps to mitigate any identified bias[46]

•prohibit AI systems that perpetuate systemic biases or discriminatory outcomes.[47]

6.33Recommendations directed to court users included that:

•lawyers and experts using AI should be aware of potential bias and ensure AI systems are not used in legal matters where this could lead to discriminatory outcomes[48]

•lawyers should undertake training on ethics, disclosure requirements, and potential biases in AI tools.[49]

6.34Increasingly, international human rights bodies are focused on mechanisms to protect individuals from biased and discriminatory applications of AI. Bias and discrimination should be addressed by:

•promoting AI systems that advance, protect and preserve linguistic and cultural diversity, taking into account multilingualism in their training data and throughout the lifecycle of the AI system, particularly for large language models[50]

•analysing and mitigating bias encoded in datasets and combating algorithmic discrimination and bias[51]

•taking reasonable efforts to minimise and avoid AI systems that reinforce or perpetuate discriminatory or biased applications and outcomes[52]

•ensuring AI systems are available and accessible to all, taking into consideration specific needs in relation to age, culture, language, disabilities, gender, and people who may be disadvantaged or marginalised.[53]

6.35To respond to this feedback, Principle 1 has been updated to highlight that users of AI should take reasonable steps to minimise and avoid discriminatory or biased outcomes. Details on how this principle could be implemented are set out in Chapter 7 for court users, Chapter 8 for judicial officers and Chapter 9 for courts and VCAT.

Principle 2: Accountability and independence

The use of AI by courts and tribunals must not compromise judicial independence and the right to be heard by an independent and impartial court or tribunal. Courts and tribunals should document clear lines of accountability for the development and use of AI.

Users of AI in courts and tribunals remain responsible for their work and should take reasonable steps to independently verify the accuracy and suitability of information provided by an AI system before relying upon it. This includes court users, judicial officers and court and tribunal staff.

Accountability

6.36Stakeholder feedback on this principle highlighted a need to capture accountability for Victoria’s courts and VCAT in their use of AI in court processes and decision making. Stakeholders stated court and tribunal users should be accountable for their own uses of AI.[54]

6.37Stakeholder feedback strongly supported the proposition that Victoria’s courts and VCAT need to be accountable to maintain trust in the integrity of the administration of justice.[55] The Supreme Court acknowledged that:

Governance and accountability are important considerations when implementing new technologies in courts … The court sees benefit in a governance and accountability structure that is capable of being applied to a breadth of AI technologies.[56]

6.38The need for reliable governance and accountability processes was highlighted as critical throughout the development and use of AI systems.[57] Some stakeholders suggested that one aspect of accountability was the process for choosing (or not choosing) a specific AI tool. The Victorian Equal Opportunity and Human Rights Commission stated it may be worth thinking about the process for holding people accountable for choosing one tool over another.[58] Similarly, the Supreme Court asserted that the principles should consider:

risks associated with not adopting the technology, such as existing risks of human error and bias, cybersecurity risks if new technologies are not adopted, and risks in terms of access to justice if potential efficiencies are not realised.[59]

6.39A key theme from consultations was that Victoria’s courts and VCAT should establish clear lines of accountability for decision making on the use of AI as part of governance arrangements. Accountability is a feature of international court and tribunal guidance. The Scottish Courts and Tribunals Service identifies accountability as a guiding principle for its use of AI and commits to having ‘appropriate governance in place to demonstrate the importance we place on our use of AI—considering its wider impacts and effects. The approval of new systems, ownership of live systems and accountability for their performance is clear’.[60]

6.40Principle 2 has been updated to refer to accountability by courts and tribunals. Methods to support governance of the use of AI by Victoria’s courts and VCAT, such as documenting accountability for decision making, are discussed in Chapter 9. Accountability is supported by the principles of transparency and contestability, as well as oversight and monitoring throughout the AI lifecycle. This is discussed further below.

6.41The principle also highlights that individuals must take reasonable steps to verify the accuracy and suitability of any AI-generated information relied on for use in courts or tribunals. Accuracy considerations to help users verify AI outputs are considered in Chapter 7 for court users, Chapter 8 for judicial officers and Chapter 9 for court and tribunal staff.

Judicial independence

6.42Judicial independence is tied to the right to a fair hearing, as people have the right to have their matter decided by a ‘competent, independent and impartial court or tribunal’.[61] Judicial independence requires ‘that a judge be, and be seen to be, independent of all sources of power or influence in society’.[62]

6.43Several stakeholders highlighted that AI must not infringe upon judicial independence.[63] The Human Rights Law Centre recommended that ‘judicial officers must retain ultimate authority and discretion over AI system decisions.’[64] Additionally, the Federation of Community Legal Centres and Justice Connect recommended that the principle be updated to reflect that:

Courts and tribunals retain their judicial independence and ultimate decision-making authority. AI can assist, but the responsibility for final rulings remains with judges, magistrates and tribunal members, who are accountable for their decisions.[65]

6.44There is also concern that if courts and tribunals use AI tools, particularly for decision-making, this may ‘introduce significant threats to judicial independence, both institutional and personal’.[66] Courts and tribunals internationally have identified judicial independence in principles for managing AI. The Federal Court of Canada states: ‘The Court will ensure its uses of AI do not undermine judicial independence.’[67]

6.45To protect judicial independence, stakeholders recommended that the use of AI for judicial decision-making should be prohibited. How this prohibition could be framed is discussed in Chapter 8.

6.46In response to stakeholder feedback, Principle 2 has been updated to include the importance of maintaining judicial independence.[68]

Principle 3: Transparency and open justice

The use of AI by courts and tribunals must be consistent with open justice.

Courts and tribunals should be transparent about their use of AI and ensure relevant information about AI systems is accessible.

Court users should be prepared to disclose their use of AI if asked to do so by a court or tribunal.

6.47Transparency on the use of AI by Victoria’s courts and VCAT is critical to upholding the principle of open justice and can support public trust in the administration of justice.

6.48As discussed in Chapter 3, transparency is one of the most common principles contained in national and international AI regulatory approaches. It is also captured in the Australian Government’s AI Ethics Principles.[69]

6.49The Open Courts Act 2013 (Vic) requires Victoria’s courts and VCAT to operate openly and transparently in alignment with the principle of open justice unless there are specific circumstances justifying displacement of this principle.

6.50Transparency was identified as ‘essential to ensuring that judicial AI systems comply with human rights standards’.[70] International human rights bodies have outlined that transparency is an essential precondition to respect and protect human rights.[71] The benefits of transparency include an increase in public scrutiny that ‘can decrease corruption and discrimination, and can also help detect and prevent negative impacts on human rights’.[72]

6.51There was strong support from stakeholders for this principle. Victoria Legal Aid commented:

We are particularly supportive of principle 3 which addressed the need for transparency and disclosure of AI use to those who may be affected by a decision reached using AI. This proposed principle ensures consistency with the fundamental common law principle of open justice and supports meaningful oversight and explainability.[73]

6.52To support transparency, stakeholders recommended that Victoria’s courts and VCAT consult on and disclose their use of AI.[74] The principle of transparency is common across several international AI guidelines and policies.[75] The Scottish Courts and Tribunals Service has a principle of transparency and states that it will ‘communicate clearly whenever AI is used and be publicly transparent about the purpose, capabilities and limitations of any AI systems we develop or use. We will provide clear information on how systems have been developed.’[76]

6.53In Chapter 9, we discuss how Victoria’s courts and VCAT can adopt processes to uphold transparency and open justice. Mechanisms considered include community consultation and the publication of an inventory of AI tools developed, purchased or deployed by Victoria’s courts and VCAT.

6.54However, the Centre for the Future of the Legal Profession and UNSW Law and Justice highlighted that because of the complexity of AI systems, transparency alone may be insufficient. They identified a need for ‘interpretable, trustworthy or explainable AI’.[77] Explainability is also important for contestability and procedural fairness discussed below.

6.55We also heard mixed views about whether court users should be encouraged or required to disclose their use of AI to prepare materials for Victoria’s courts and VCAT. In Chapter 7 we discuss the opportunities and challenges of disclosure for court users when using AI to prepare court documents.

Principle 4: Contestability and procedural fairness

The use of AI to make or materially influence decisions by courts and tribunals should not undermine people’s rights to challenge decisions. If courts and tribunals use AI to make or materially influence a decision they should notify anyone whose rights are significantly affected by that decision. Notification should include clear information to enable a person to understand the decision. Courts and tribunals should provide processes to enable human oversight of decisions made or materially influenced by AI.

The use of AI in courts and tribunals should be consistent with the right to procedural fairness. Courts and tribunals should uphold people’s right to assess and challenge AI evidence or outputs.

AI decision making by courts and tribunals

6.56This principle aims to ensure that the use of AI by courts and tribunals does not undermine people’s rights to challenge decisions. As noted in the principle of contestability in Australia’s AI Ethics Principles, ‘Knowing that redress for harm is possible, when things go wrong, is key to ensuring public trust in AI.’[78] To maintain public trust, courts and tribunals should ensure the incorporation of AI does not prevent people from exercising their rights to challenge decisions that significantly affect them.

6.57In Chapter 8, it is recommended that AI should not be used for judicial decision-making. Because of the risks of bias and lack of explainability associated with AI tools (discussed in Chapter 3), courts and tribunals should exercise caution when considering using AI for decisions about administrative matters, such as automated case listings. In Chapter 2 we provided an example of an AI powered case allocation system used in Poland which has faced legal challenge due to concerns around opacity, errors and the potential for manipulation.[79]

6.58There are a range of existing ways people can challenge decisions by courts and tribunals.[80]

6.59However, courts and tribunals should be cautious that introducing AI could negatively impact people’s ability to challenge decisions. It could be harder for people to challenge a decision if:

•people do not know AI was used to make or materially influence a decision

•there is no ability to understand how an AI tool has arrived at a decision (due to the complexity or opacity of the AI tool, or because the AI provider refuses to disclose information about its data or algorithms)

•there is no human oversight of a decision.

6.60When considering how courts and tribunals could safely incorporate AI decision-making tools we heard from stakeholders that:

•Courts or tribunals should not use AI systems which are unable to provide explanation or where the providers of such systems resist doing so for commercial purposes.[81]

•Courts and tribunals should explain how AI systems are used in ways that court users can understand, using simple terms to explain their capabilities and limitations.[82]

•Anyone affected by a decision should have a readily accessible means of obtaining sufficient information to enable them to query or challenge that decision.[83]

•Courts and tribunals should incorporate human oversight over AI-driven decision-making processes.[84]

6.61To respond to stakeholder feedback, Principle 4 has been updated to reflect that Victoria’s courts and VCAT should ensure people’s rights to challenge decisions are not undermined by:

a)notifying people whose rights are significantly affected by a decision made or materially influenced by AI. Notification should include clear and understandable information to allow people to understand the decision.

b)ensuring there is human oversight of decisions made or materially influenced by AI. The extent of oversight will depend on the context.

6.62In Chapter 9 we discuss how Principle 4 can be implemented by Victoria’s courts and VCAT in governance processes.

Assessing and contesting AI evidence

6.63Procedural fairness, or natural justice, is commonly understood to involve:

•the right to a decision free of bias

•an appropriate opportunity to be heard.[85]

6.64Procedural fairness may also involve an opportunity for parties to challenge evidence against them.[86]

6.65The risk of bias, a lack of robust testing and validation, and a lack of transparency because of complexity and proprietary interests may hinder the ability of a court, tribunal or party to assess or contest evidence.[87]

6.66The Law Commission of Ontario has provided examples of the complex statistical, technical and legal issues associated with AI tools which people may need to consider. These include:

•data used to train the AI system and whether it is biased, accurate, reliable or valid

•variables and whether they are weighed and calculated appropriately

•the code

•operations or outputs and whether they are understandable

•the accuracy of the system and whether testing and validation of the system has occurred and is appropriate.[88]

6.67The Law Commission of Ontario notes that being able to understand and challenge the above considerations would create significant difficulties even for the best resourced litigants, and these issues would be significantly worse for under- or unrepresented litigants.[89]

6.68Principle 4 has been updated to reflect that courts and tribunals must uphold people’s right to assess and challenge AI evidence or outputs.

6.69In Chapter 5 we discussed methods that could be used to support the assessment of evidence generated by AI tools. This included:

•the Supreme Court’s Practice Note Expert Evidence in Criminal Trials which provides some options to enable courts to explore issues of reliability and question the intricacies of expert evidence on AI tools

•resources for judicial officers to support them to question AI evidence

•court powers to enable court appointed experts, single joint experts, and concurrent evidence[90] and to:

–direct expert witnesses to hold a conference of experts and/or prepare a joint experts report[91]

–refer a question to a special referee[92]

–call in the assistance of one or more specially qualified assessors.[93]

Principle 5: Privacy and data security

AI systems should be developed and used in courts and tribunals with respect for people’s privacy rights. This requires careful collection, use, storage and disposal of data. It also requires appropriate data protection, governance and management throughout the lifecycle of any AI used by courts and tribunals.

To protect privacy and data security, non-public information should not be entered into public AI tools by courts and tribunals.

6.70Public trust requires confidence that Victoria’s courts and VCAT will protect privacy rights in their use of AI tools.

6.71Many stakeholders raised privacy and information security concerns about the use of AI in Victoria’s courts and VCAT.[94] In Chapter 3 we discuss these risks and outline how different types of AI systems can carry different privacy and information security risks.

6.72In Australia, the legal system operates in various ways to protect confidentiality and privacy. This includes through general law principles, contractual rights and legislation such as the Privacy Act 1988 (Cth). In Victoria, privacy rights are further protected by the Charter,[95] and the Privacy and Data Protection Act 2014 (Vic). The protection of privacy rights in Victorian legislation is consistent with the recognition of privacy rights at international law.[96]

6.73In Chapter 5 we discuss how privacy rights apply to Victoria’s courts and VCAT. While Victoria’s courts and tribunals are exempt from some privacy obligations, we were told they aim to follow state and national privacy regimes, and consider data security issues, where they are not incompatible with other court obligations.

6.74Stakeholders recommended a range of options to support the protection of privacy where AI systems are used by Victoria’s courts and VCAT. Options and processes to protect privacy rights could include:

•prohibiting non-public information from being entered into public AI tools[97]

•undertaking due diligence when procuring an AI tool to ask questions about the foundation model used and training data (for example: Was data lawfully obtained? What has been done to treat the training data? Has personal information been removed?)[98]

•undertaking privacy impact assessments to understand how closed AI tools will be used and consider risks and mitigations[99]

•assessing AI tools for data security risks before use, ensuring compliance with Australian privacy laws[100]

•developing and implementing clear guidance and training on the use of AI tools[101]

•acting consistently with Victorian and Australian Government guidance on the use of AI, unless a deviation is demonstrated as necessary[102]

•implementing strong data governance frameworks, anonymisation techniques, data minimisation and robust consent protocols[103]

•implementing data protection measures to comply with privacy laws and safeguard sensitive legal information[104]

•developing an incident response plan for dealing with inadvertent disclosures or misuse of information.[105]

6.75AI systems used by Victoria’s courts and tribunals will require ongoing surveillance and contract management to ensure continuing compliance with these protections.

6.76International human rights bodies have developed guidance on the risks AI poses to privacy rights. The United Nations Human Rights Council has recognised that the use of emerging technologies can have considerable adverse effects to individuals’ privacy rights.[106] To address these risks, UNESCO has recommended that ‘privacy should be respected, protected and promoted throughout the lifecycle of AI systems’ through a range of mechanisms including privacy by design principles, privacy impact assessments and the establishment of appropriate data security safeguards and policies.[107]

6.77Courts and tribunals internationally have embedded data security and privacy into their AI guidelines and policies. The Federal Court of Canada has a principle of cybersecurity which states that: ‘The Court will store and manage its data in a secure technological environment that protects the confidentiality, privacy, provenance, and purpose of the data managed.’[108]

6.78In Chapter 5, we considered policy approaches to support privacy and data governance in Victoria’s courts and VCAT. Principle 5 has been updated to refer to privacy rights and describes the need for limited uses of public AI systems, which should not be used for inputting sensitive information.

6.79More generally, lawyers and other court users need to consider privacy and data security when using AI systems. For lawyers, particular caution is needed to ensure confidential client data is protected.

Principle 6: Access to justice

The use of AI by courts and tribunals should support access to justice. Courts and tribunals should ensure the use of AI does not create or exacerbate barriers or inequalities, such as a lack of access to technology.

Courts and tribunals should use tools that are accessible to all individuals and do not exclude those from marginalised and disadvantaged backgrounds.

The use of AI systems by courts and tribunals should complement and not replace the option for court users to access human support pathways.

The use of AI by courts and tribunals should be consistent with Indigenous Data Sovereignty rights.

6.80Stakeholders recognised that the use of AI in Victoria’s courts and VCAT presents both opportunities and risks for people’s access to justice. These benefits and risks are considered in Chapter 3. Access to justice is inherently tied to the human right to equality before the law, as discussed above in Principle 1: impartiality and fairness.[109]

6.81We heard that Victoria’s courts and VCAT should consider how AI can be used to enhance the accessibility of justice services.[110] The Federation of Community Legal Centres and Justice Connect recommended that AI implementation projects should be resourced ‘to support Victorian courts, tribunals and legal services to develop and deploy AI tools that will improve user access to information and justice’.[111]

6.82Stakeholders highlighted that it was important for courts to consider the impact on access to justice when implementing AI systems. Stakeholders also made recommendations to expand on Principle 6 by:

•recognising that AI can create new inequalities and contribute to the digital divide[112]

•incorporating considerations of Indigenous Data Sovereignty[113]

•recognising risks if new technologies are not adopted, and risks if potential efficiencies are not realised.[114]

6.83In Chapter 3 we discuss how the use of AI by Victoria’s courts and VCAT can create and intensify access to justice challenges for individuals with limited access to technology or lower digital literacy. This is often referred to as the ‘digital divide’. Suggestions were made by stakeholders to address this risk by:

•clearly disclosing use of AI systems and ensuring there is always a choice to interact with a human[115]

•providing training and accessible resources to help individuals develop the digital skills needed to use AI-powered legal tools effectively.[116]

6.84We heard that AI should be used in Victoria’s courts and VCAT in a way that complements, rather than replaces, human supports. This recourse to court support staff is a vital point of connection where people are unable to access or use technology.

6.85Access to justice has been incorporated into international AI approaches by courts and tribunals. Spain’s Policy on the use of artificial intelligence in the administration of justice has a principle of equity and universal access which requires that:

AI in the Administration of Justice must be used to guarantee equitable access to judicial systems, regardless of location, socioeconomic status or any other demographic characteristic. This involves developing solutions that remove barriers and ensure that all citizens have the same opportunity to assert their rights before the law.[117]

6.86Principle 6 has been updated to recognise that Victoria’s courts and VCAT should be aware of and seek to avoid creating or exacerbating barriers to accessing technology amongst court users. The principle reflects that court users should retain an option to interact with a human. This ensures court and tribunal services remain accessible to everyone. Opportunities for public education and training are considered in Chapter 10.

6.87Principle 6 has also been updated to reference Indigenous Data Sovereignty, as recommended by representatives of community legal centres who stated:

The Victorian Government is committed to implementing Indigenous data governance and sovereignty through the Yoorrook treaty process. The discussion of principles should include considerations of Indigenous Data Sovereignty.[118]

6.88The Victorian Government has committed to embedding Indigenous Data Sovereignty principles.[119] Indigenous Data Sovereignty has been defined by the Maiam nayri Wingara Indigenous Data Sovereignty Collective as:

The right of Indigenous people to exercise ownership over Indigenous Data. Ownership of data can be expressed through the creation, collection, access, analysis, interpretation, management, dissemination and reuse of Indigenous Data.[120]

6.89Indigenous Data Governance is defined as:

The right of Indigenous peoples to autonomously decide what, how and why Indigenous Data are collected, accessed and used. It ensures that data on or about Indigenous peoples reflects our priorities, values, cultures, worldviews and diversity.[121]

6.90The Australian Government’s Framework for Governance of Indigenous Data relies on the definitions and principles for Indigenous Data Sovereignty developed by the Maiam nayri Wingara Indigenous Data Sovereignty Collective.[122] Indigenous data includes information and knowledge ‘which is about and may affect Indigenous peoples both collectively and individually.’[123]

6.91The CSIRO provided feedback to the Australian Government that, when considering how AI is to be regulated, ‘understanding about how First Nations data and knowledge can be used to train AI systems is required’.[124] The CSIRO said that ‘the development of Indigenous Data Protocols is essential for the ethical use of data and creation of AI’.[125]

6.92It is important for Victoria’s courts and VCAT to consider Indigenous Data Sovereignty rights when developing data governance and management frameworks for any AI system. This could include seeking Indigenous expertise and leadership to guide, test and validate potential AI systems. Representatives from Victoria Legal Aid told us that:

The principles of Indigenous Data Sovereignty and self-determination should be at the centre of any initiative that involves data about First Nations people, communities, or cultural practices. These aren’t just theoretical concepts. They reflect the fundamental right of First Nations people to control how their data is collected, used, interpreted, and shared … This is especially critical in a justice context. There’s a long and painful history of data being used in ways that have harmed, misrepresented, and disempowered First Nations people … We can’t ignore that history.[126]

Principle 7: Efficiency and effectiveness

The use of AI by courts and tribunals should contribute to improved and increased provision of court and tribunal services. The use of AI can support efficient, timely and cost-effective administration of justice but must not detract from fundamental objectives of a just and fair process.

Effective AI tools should contribute to improved processes, services or outcomes for individuals interacting with the justice system.

Assessments of efficiency should consider direct and indirect societal and environmental impacts, across the AI lifecycle.

6.93Victoria’s courts and VCAT have existing legislative obligations to operate in a ‘just, efficient, timely and cost-effective’ manner in civil matters.[127] The right to timely justice in criminal matters is protected under the Charter as people have the right to have criminal matters heard without ‘unreasonable delay’.[128]

6.94Greater efficiency can assist in minimising delays and improving timely access to justice. AI could provide efficiency benefits for Victoria’s courts and court users, given performance data indicates that Victoria has court backlogs in some areas.[129] The Canadian Judicial Council stated that:

Backlogs in court scheduling represent a significant access to justice problem. Canadian courts are investigating and will be experimenting with the use of AI to improve efficiency in case management, alternative dispute resolution and other internal and public-facing areas. However, potential efficiencies require a broad analysis and need to be balanced with other considerations.[130]

6.95We heard that as part of expanding Principle 7, efficiency should incorporate consideration of the broader costs of AI, such as the environmental impact of AI systems.[131] The direct and indirect environmental impacts of AI are significant and arise across the AI lifecycle.[132] Training and running AI models can use a significant amount of energy and water and create greenhouse gas emissions.[133]

6.96We also heard from stakeholders that the proposed principle of ‘efficiency’ in our consultation paper should be broadened and include consideration of efficacy or effectiveness. ‘Effectiveness’ is commonly defined as ‘producing the intended or expected result’.[134] Applied to Victoria’s justice system, the intended outcome identified by the Supreme Court is ‘to serve the community by upholding the law through just, independent and impartial decision making and dispute resolution’.[135]

6.97Stakeholders told us that Principle 7 should be broader than consideration of costs. Rather, it should focus on how AI systems can contribute to improvements for individuals interacting with Victoria’s courts and VCAT and better outcomes for Victoria’s justice system.[136]

6.98This approach aligns with the Scottish Courts and Tribunals Service AI policy which incorporates the principle of ‘public good’ and states that AI will only be used ‘where it is of benefit to our service users, staff or members of the judiciary’.[137] We also heard that where efficiencies are realised, savings should be invested back into the court system to increase access and improve services.[138]

6.99This shift allows for the impacts of AI systems on court users to be considered in a broad context, rather than AI systems being assessed only against time and cost savings. This approach is complementary to the principle of access to justice, as discussed above.

6.100It was recommended that one way for Victoria’s courts and VCAT to achieve this is to implement human-centred design.[139] The Federation of Community Legal Centres and Justice Connect recommended that Victoria’s courts and VCAT should ensure AI tools ‘are designed with accessibility in mind to enhance public trust and engagement’.[140]

6.101We heard that environmental impacts should be considered when courts and tribunals are making decisions about designing, developing, deploying or procuring AI.[141] If environmental impacts are identified, courts and tribunals should consider strategies to reduce them.[142] Principle 7 has been updated to incorporate this feedback and include consideration of environmental impacts of AI systems in Victoria’s courts and VCAT.

Principle 8: Human oversight and monitoring

Courts and tribunals must implement appropriate human oversight as a check on their use of AI systems to address risks relating to AI, including bias, reliability and accuracy. Human oversight should include evaluation and testing before use and ongoing monitoring, assessment and compliance of AI systems after implementation. The level of human oversight required will vary depending on the use and risks of AI in specific contexts.

6.102Appropriate human oversight and monitoring can help safeguard the implementation and management of AI use in Victoria’s courts and VCAT. Many of these concerns around AI implementation were considered in Chapter 3.

6.103We heard from stakeholders that, while AI can be useful, its outputs need to be verified by humans.

6.104Internationally, courts and tribunals have included human oversight in their AI use policies and guidelines. The Federal Court of Canada’s Interim Principles and Guidelines on the Court’s Use of Artificial Intelligence[143] includes the principle of ‘human in the loop’, and the European Ethical Charter on the Use of Artificial Intelligence in Judicial Systems and their Environment[144] has a guiding principle of ‘under user control’.

6.105Several stakeholders recommended ongoing oversight, monitoring and evaluation processes. We heard that:

•Continuous monitoring and periodic review of AI systems must be established to ensure courts and tribunals adapt to evolving legal, societal, and technological contexts.[145]

•The level of governance (consultation, testing, training, reporting and oversight) may vary depending on the technology being implemented.[146]

•Governance arrangements and frameworks should include data governance and infrastructure necessary to monitor and evaluate the impacts of the use of AI in court and tribunal settings.[147]

•Independent auditing mechanisms must be established to regularly review AI systems for compliance with the Charter and international human rights standards.[148]

•Human oversight and review are necessary to maintain judicial oversight over AI-driven decision-making processes, prevent unjust outcomes and allow for contestability.[149]

•Courts and tribunals should regularly monitor and evaluate AI systems to identify risks, measure effectiveness and make necessary improvements.[150]

6.106Principle 8 has been updated to ensure a focus on ongoing monitoring and evaluation of AI systems across all AI lifecycle stages.

6.107In Chapter 9 we discuss how Victoria’s courts and VCAT can implement appropriate oversight and monitoring arrangements through good AI governance practices. We explore mechanisms for establishing human oversight bodies and implementing an AI assurance framework to be applied across the AI lifecycle.

6.108The idea of human oversight and monitoring is also relevant to individuals. As discussed under Principle 2, we heard that court users should maintain oversight of AI outputs used or relied on in courts.[151]

6.109Principle 8 recognises that the level of human oversight required will depend on various factors. This approach is adopted in interjurisdictional court and tribunal policies.[152] The level of oversight necessary is considered separately in Chapter 7 for court users, Chapter 8 for judicial officers and in Chapter 9 for Victoria’s courts and VCAT.

Recommendation 4. The Commission’s principles should be adopted and applied where relevant by Victoria’s courts and VCAT when making decisions about the use of AI. The individual principles should be employed to achieve the overarching objective of maintaining public trust.

-

Some examples include: ‘Australia’s AI Ethics Principles’, Department of Industry, Science and Resources (Web Page, 11 October 2024) <https://www.industry.gov.au/publications/australias-artificial-intelligence-ethics-principles/australias-ai-ethics-principles>; AI Forum New Zealand, Trustworthy AI in Aotearoa: AI Principles (Report, March 2020) <https://aiforum.org.nz/wp-content/uploads/2020/03/Trustworthy-AI-in-Aotearoa-March-2020.pdf>; AI Verify, ‘AI Verify: AI Governance Testing Framework and Toolkit’, Personal Data Protection Commission, Singapore (Web Page, 25 May 2022) <https://www.pdpc.gov.sg/news-and-events/announcements/2022/05/launch-of-ai-verify—an-ai-governance-testing-framework-and-toolkit>; Council of Europe, Council of Europe Framework Convention on Artificial Intelligence and Human Rights, Democracy and the Rule of Law, opened for signature 5 September 2024, CETS No. 225 <https://www.coe.int/en/web/artificial-intelligence/the-framework-convention-on-artificial-intelligence>.

-

Felicity Bell et al, AI Decision-Making and the Courts: A Guide for Judges, Tribunal Members and Court Administrators (Report, Australasian Institute of Judicial Administration, December 2023) 30–40.

-

Submissions 4 (Coroners Court of Victoria), 5 (Office of the Victorian Information Commissioner), 12 (Victoria Legal Aid), 16 (Law Institute Victoria), 17 (Office of Public Prosecutions), 23 (Victorian Bar Association), 26 (Supreme Court of Victoria). Consultations 2 (Coroners Court of Victoria), 5 (Victorian Bar Association), 7 (Judicial College of Victoria), 10 (Coronial Council of Victoria), 13 (Federal Circuit and Family Court of Australia), 15 (Magistrates’ Court of Victoria), 32 (Supreme Court of Victoria).

-

Submissions 10 (Castan Centre for Human Rights Law, Monash University), 27 (Federation of Community Legal Centres and Justice Connect). Confidential submission 26. Consultations 4 (Victorian Legal Services Board and Commissioner), 8 (Federation of Community Legal Centres Workshop), 22 (Court Services Victoria), 27 (UNSW’s Centre for the Future of the Legal Profession and Professor Lyria Bennett Moses).

-

Submission 23 (Victorian Bar Association). Consultation 23 (Dr Fabian Horton).

-

Consultation 32 (Supreme Court of Victoria).

-

Submission 16 (Law Institute Victoria).

-

Submission 22 (Centre for the Future of the Legal Profession and UNSW Law and Justice).

-

Except for one stakeholder who considered legislation could play a role in future in clearly articulating rules about permissible and prohibited uses of AI by the judiciary: Consultation 34 (Human Technology Institute).

-

Submissions 10 (Castan Centre for Human Rights Law, Monash University), 27 (Federation of Community Legal Centres and Justice Connect).

-

Submissions 10 (Castan Centre for Human Rights Law, Monash University), 15 (Human Rights Law Centre), 18 (Northern Community Legal Centre), 27 (Federation of Community Legal Centres and Justice Connect). Consultations 31 (Victorian Equal Opportunity & Human Rights Commission), 35 (Victoria Legal Aid).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect). Consultation 8 (Federation of Community Legal Centres Workshop) 7. This aligns with the approach of submissions to the Commonwealth Department of Industry, Science and Resources’ consultation on ‘Introducing mandatory guardrails for AI in high-risk settings’. See, for example, submissions from the National Environmental Law Association, Human Technology Law Centre (School of Law, Queensland University of Technology), and ARC Centre of Excellence on Automated Decision-Making and Society: ‘Introducing Mandatory Guardrails for AI in High-Risk Settings: Proposals Paper – Published Responses’, Department of Industry, Science and Resources (Cth) (Web Page) <https://consult.industry.gov.au/ai-mandatory-guardrails/submission/list>.

-

Consultations 4 (Victorian Legal Services Board and Commissioner), 8 (Federation of Community Legal Centres Workshop). See also submissions from First Nations Digital Inclusion Advisory Group, DLC Legal, Australian Securities and Investments Commission, Human Technology Institute, CSIRO, and AI Asia Pacific Institute to the Department of Industry, Science and Resources’ consultation ‘Introducing Mandatory Guardrails for AI in High-Risk Settings: Proposals Paper – Published Responses’, Department of Industry, Science and Resources (Cth) (Web Page) <https://consult.industry.gov.au/ai-mandatory-guardrails/submission/list>.

-

Submission 6 (Victorian Legal Services Board and Commissioner).

-

Ibid. Consultation 4 (Victorian Legal Services Board and Commissioner).

-

Consultations 2 (Coroners Court of Victoria), 9 (Victorian Civil and Administrative Tribunal), 11 (Law Institute of Victoria), 13 (Federal Circuit and Family Court of Australia), 31 (Victorian Equal Opportunity & Human Rights Commission).

-

Submission 12 (Victoria Legal Aid). Consultation 28 (Monash University Digital Law Group).

-

Submission 20 (Deakin Law Clinic).

-

Submission 22 (Centre for the Future of the Legal Profession and UNSW Law and Justice).

-

Submission 26 (Supreme Court of Victoria).

-

Consultation 34 (Human Technology Institute).

-

Consultation 22 (Court Services Victoria).

-

Submission 22 (Centre for the Future of the Legal Profession and UNSW Law and Justice).

-

For an analysis of AI and international human rights norms, see Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) [6]-[11] <https://docs.un.org/en/A/80/169>.

-

Submission 10 (Castan Centre for Human Rights Law, Monash University).

-

European Commission for the Efficiency of Justice (CEPEJ), European Ethical Charter on the Use of Artificial Intelligence in Judicial Systems and Their Environment (2019, adopted at the 31st plenary meeting of the CEPEJ, Strasbourg, 3-4 December 2018); United Nations Educational, Scientific and Cultural Organization (UNESCO), Draft Guidelines for the Use of AI Systems in Courts and Tribunals (Guidelines, May 2025) 12 <https://unesdoc.unesco.org/ark:/48223/pf0000393682>; ‘Interim Principles and Guidelines on the Court’s Use of Artificial Intelligence’, Federal Court of Canada (Guidelines, 20 December 2023) <https://www.fct-cf.gc.ca/en/pages/law-and-practice/artificial-intelligence>; Minister of the Presidency, Justice and Relations with the Courts (Spain), Policy on the Use of Artificial Intelligence in the Administration of Justice (Policy, 2024) 4 <https://www.mjusticia.gob.es/es/JusticiaEspana/ProyectosTransformacionJusticia/Documents/Spains_Policy_on_the_use_of_AI_in_the_Justice_Administration.pdf>; Victoria Police, Victoria Police Artificial Intelligence Ethics Framework (Policy, 20 March 2024) <https://www.police.vic.gov.au/victoria-police-artificial-intelligence-ethics-framework>.

-

Australia New Zealand Policing Advisory Agency (ANZPAA), Australia New Zealand Responsible and Ethical Artificial Intelligence Framework (Report, 22 July 2025) 3 <https://www.anzpaa.org.au/products/products/australia-new-zealand-responsible-and-ethical-artificial-intelligence-framework>.

-

Charter of Human Rights and Responsibilities Act 2006 (Vic).

-

Ibid s 24(1).

-

Ibid s 8(3).

-

United Nations, International Covenant on Civil and Political Rights, GA Res 2200A (XXI) (23 March 1976, adopted and opened for signature 16 December 1966) art 14 <https://www.ohchr.org/en/instruments-mechanisms/instruments/international-covenant-civil-and-political-rights>.

-

Sophie Farthing et al, Human Rights and Technology (Final Report, Australian Human Rights Commission, 2021) 88 <https://humanrights.gov.au/our-work/technology-and-human-rights/projects/final-report-human-rights-and-technology>.

-

Consultation 10 (Coronial Council of Victoria).

-

Submission 18 (Northern Community Legal Centre).

-

Ibid.

-

Tendayi Achiume, Special Rapporteur, Racial Discrimination and Emerging Digital Technologies: A Human Rights Analysis: Report of the Special Rapporteur on Contemporary Forms of Racism, Racial Discrimination, Xenophobia and Related Intolerance, UN Doc A/HRC/44/57 (18 June 2020) [7], [28] <https://documents.un.org/doc/undoc/gen/g20/151/06/pdf/g2015106.pdf>.

-

Council of Europe Steering Committee for Human Rights (CDDH) and Drafting Group on Human Rights and Artificial Intelligence (CDDH-IA), [DRAFT] Handbook on Human Rights and Artificial Intelligence: Chapters I and III (CDDH-IA(2025)1, 17 January 2024) 11.

-

Submission 10 (Castan Centre for Human Rights Law, Monash University).

-

Australian Human Rights Commission, Adopting AI in Australia (Submission No. 71 to Senate Select Committee on Adopting Artificial Intelligence (AI), 15 May 2024) 7 <https://humanrights.gov.au/our-work/legal/submission/adopting-ai-australia>.

-

Ibid.

-

Monika Zalnieriute, Technology and the Courts: Artificial Intelligence and Judicial Impartiality (Submission No. 3 to Australian Law Reform Commission Review of Judicial Impartiality, June 2021) 5–6 <https://www.alrc.gov.au/wp-content/uploads/2021/06/3-.-Monika-Zalnieriute-Public.pdf>.

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Consultation 31 (Victorian Equal Opportunity & Human Rights Commission).

-

Submission 18 (Northern Community Legal Centre).

-

Consultation 24 (Victorian Advocacy League for Individuals with Disability).

-

Submission 6 (Victorian Legal Services Board and Commissioner).

-

Submission 15 (Human Rights Law Centre).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Submission 20 (Deakin Law Clinic).

-

United Nations, Seizing the Opportunities of Safe, Secure and Trustworthy Artificial Intelligence Systems for Sustainable Development, UN Doc A/78/L.49 (11 March 2024) 6 [6(m)] <https://docs.un.org/A/78/L.49>.

-

Ibid [6(h)].

-

United Nations Educational, Scientific and Cultural Organization (UNESCO), Recommendation on the Ethics of Artificial Intelligence (2022, adopted on 23 Nov 2021) 20 <https://unesdoc.unesco.org/ark:/48223/pf0000381137>.

-

Ibid.

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Submissions 5 (Office of the Victorian Information Commissioner), 27 (Federation of Community Legal Centres and Justice Connect).

-

Submission 26 (Supreme Court of Victoria).

-

Submission 15 (Human Rights Law Centre).

-

Consultation 31 (Victorian Equal Opportunity & Human Rights Commission).

-

Submission 26 (Supreme Court of Victoria).

-

Scottish Courts and Tribunals Service, Scottish Courts and Tribunals Service: Our Approach to the Development of Services Using Artificial Intelligence (Policy, April 2025) 3 <https://www.scotcourts.gov.uk/media/xalno3ff/scts-ai-policy.pdf>.

-

Charter of Human Rights and Responsibilities Act 2006 (Vic) s 24.

-

Australian Institute of Judicial Administration (AIJA), Guide to Judicial Conduct, Third Edition (Revised) (Guide, December 2023) 7 <https://aija.org.au/wp-content/uploads/2024/04/Judicial-Conduct-guide_revised-Dec-2023-formatting-edits-applied.pdf>.

-

Submission 15 (Human Rights Law Centre).

-

Ibid.

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Daniel Escott, FIJIT: Integrating Judicial Independence and Technology (Manuscript, Osgoode Hall Law School, York University, 2025) 8.

-

‘Interim Principles and Guidelines on the Court’s Use of Artificial Intelligence’, Federal Court of Canada (Guidelines, 20 December 2023) 2 <https://www.fct-cf.gc.ca/en/pages/law-and-practice/artificial-intelligence>.

-

Submission 24 (County Court of Victoria).

-

‘Australia’s AI Ethics Principles’, Department of Industry, Science and Resources (Web Page, 11 October 2024) <https://www.industry.gov.au/publications/australias-artificial-intelligence-ethics-principles/australias-ai-ethics-principles>. See also Organisation for Economic Co-operation and Development (OECD), Recommendation of the Council on Artificial Intelligence, OECD/LEGAL/0449, 3 May 2024, 8–9 <https://legalinstruments.oecd.org/en/instruments/OECD-LEGAL-0449>.

-

Submission 15 (Human Rights Law Centre).

-

United Nations Educational, Scientific and Cultural Organization (UNESCO), Recommendation on the Ethics of Artificial Intelligence (2022, adopted on 23 Nov 2021) 22 <https://unesdoc.unesco.org/ark:/48223/pf0000381137>.

-

Ibid.

-

Submission 12 (Victoria Legal Aid).

-

Submissions 12 (Victoria Legal Aid), 15 (Human Rights Law Centre), 16 (Law Institute Victoria), 18 (Northern Community Legal Centre).

-

Courts and Tribunals Judiciary (UK), Artificial Intelligence (AI) Guidance for Judicial Office Holders (Guidance, 14 April 2025) <https://www.judiciary.uk/wp-content/uploads/2025/04/Refreshed-AI-Guidance-published-version.pdf>; European Commission for the Efficiency of Justice (CEPEJ), European Ethical Charter on the Use of Artificial Intelligence in Judicial Systems and Their Environment (2019, adopted at the 31st plenary meeting of the CEPEJ, Strasbourg, 3-4 December 2018) 59–61; ‘Interim Principles and Guidelines on the Court’s Use of Artificial Intelligence’, Federal Court of Canada (Guidelines, 20 December 2023) 2 <https://www.fct-cf.gc.ca/en/pages/law-and-practice/artificial-intelligence>; Minister of the Presidency, Justice and Relations with the Courts (Spain), Policy on the Use of Artificial Intelligence in the Administration of Justice (Policy, 2024) 4 <https://www.mjusticia.gob.es/es/JusticiaEspana/ProyectosTransformacionJusticia/Documents/Spains_Policy_on_the_use_of_AI_in_the_Justice_Administration.pdf>; United Nations Educational, Scientific and Cultural Organization (UNESCO), Draft Guidelines for the Use of AI Systems in Courts and Tribunals (Guidelines, May 2025) 18 <https://unesdoc.unesco.org/ark:/48223/pf0000393682>.

-

Scottish Courts and Tribunals Service, Scottish Courts and Tribunals Service: Our Approach to the Development of Services Using Artificial Intelligence (Policy, April 2025) 2 <https://www.scotcourts.gov.uk/media/xalno3ff/scts-ai-policy.pdf>.

-

Submission 22 (Centre for the Future of the Legal Profession and UNSW Law and Justice).

-

‘Australia’s AI Ethics Principles’, Department of Industry, Science and Resources (Web Page, 11 October 2024) <https://www.industry.gov.au/publications/australias-artificial-intelligence-ethics-principles/australias-ai-ethics-principles>.

-

See for example Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 12 <https://docs.un.org/en/A/80/169>.

-

For example, the Supreme Court has a common law and statutory jurisdiction to undertake judicial review of administrative decisions. Administrative Law Act 1978 (Vic) ss 3, 7; Supreme Court (General Civil Procedure) Rules 2015 (Vic) ord 56.

-

Submission 22 (Centre for the Future of the Legal Profession and UNSW Law and Justice).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Submission 22 (Centre for the Future of the Legal Profession and UNSW Law and Justice).

-

Submissions 15 (Human Rights Law Centre), 27 (Federation of Community Legal Centres and Justice Connect).

-

Plaintiff S157/2002 v Commonwealth [2003] HCA 2; (2003) 211 CLR 476, [25].

-

Felicity Bell et al, AI Decision-Making and the Courts: A Guide for Judges, Tribunal Members and Court Administrators (Report, Australasian Institute of Judicial Administration, December 2023) 54.

-

Paul W Grimm, Maura R Grossman and Gordon V Cormack, ‘Artificial Intelligence as Evidence’ (2021) 19(1) Northwestern Journal of Technology and Intellectual Property 9, 94, 98.

-

Law Commission of Ontario, Accountable AI (LCO Final Report, June 2022) 38 <https://www.lco-cdo.org/wp-content/uploads/2022/06/Accountable-AI-reduced-size.pdf>.

-

Ibid.

-

Civil Procedure Act 2010 (Vic) ss 65K, 65L, 65M.

-

Ibid s 65I; Supreme Court (General Civil Procedure) Rules 2025 (Vic) ord 44.06; Victorian Civil and Administrative Tribunal Act 1998 (Vic) sch 3; Submission 26 (Supreme Court of Victoria).

-

Supreme Court (General Civil Procedure) Rules 2025 (Vic) ord 50.01; Victorian Civil and Administrative Tribunal Act 1998 (Vic) s 95; Submission 26 (Supreme Court of Victoria).

-

Supreme Court Act 1986 (Vic) s 77; Submission 26 (Supreme Court of Victoria).

-

Submissions 2 (Assoc Prof Marcus Smith), 7 (Dr Natalia Antolak-Saper), 10 (Castan Centre for Human Rights Law, Monash University), 15 (Human Rights Law Centre), 27 (Federation of Community Legal Centres and Justice Connect). Consultation 31 (Victorian Equal Opportunity & Human Rights Commission).

-

Charter of Human Rights and Responsibilities Act 2006 (Vic) s 13.

-

United Nations, International Covenant on Civil and Political Rights, GA Res 2200A (XXI) (23 March 1976, adopted and opened for signature 16 December 1966) art 17 <https://www.ohchr.org/en/instruments-mechanisms/instruments/international-covenant-civil-and-political-rights>.

-

Submissions 5 (Office of the Victorian Information Commissioner), 7 (Dr Natalia Antolak-Saper), 27 (Federation of Community Legal Centres and Justice Connect).

-

Submission 5 (Office of the Victorian Information Commissioner).

-

Ibid; Consultation 25 (Microsoft).

-

Submissions 5 (Office of the Victorian Information Commissioner), 27 (Federation of Community Legal Centres and Justice Connect).

-

Submission 5 (Office of the Victorian Information Commissioner).

-

Ibid.

-

Submission 15 (Human Rights Law Centre).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Submission 5 (Office of the Victorian Information Commissioner).

-

Ana Brian Nougreres, Special Rapporteur, Legal Safeguards for Personal Data Protection and Privacy in the Digital Age: Report of the Special Rapporteur on the Right to Privacy, Ana Brian Nougreres, UN Doc A/HRC/55/46 (18 January 2024) [76] <https://docs.un.org/en/A/HRC/55/46>.

-

United Nations Educational, Scientific and Cultural Organization (UNESCO), Recommendation on the Ethics of Artificial Intelligence (2022, adopted on 23 Nov 2021) 29 <https://unesdoc.unesco.org/ark:/48223/pf0000381137>.

-

‘Interim Principles and Guidelines on the Court’s Use of Artificial Intelligence’, Federal Court of Canada (Guidelines, 20 December 2023) 2 <https://www.fct-cf.gc.ca/en/pages/law-and-practice/artificial-intelligence>.

-

See also Margaret Satterthwaite, Special Rapporteur, AI in Judicial Systems: Promises and Pitfalls: Report of the Special Rapporteur on the Independence of Judges and Lawyers, Margaret Satterthwaite, UN Doc A/80/169 (16 July 2025) 4–5 <https://docs.un.org/en/A/80/169>.

-

Submission 17 (Office of Public Prosecutions).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Consultation 31 (Victorian Equal Opportunity & Human Rights Commission).

-

Consultations 8 (Federation of Community Legal Centres Workshop), 35 (Victoria Legal Aid).

-

Submission 26 (Supreme Court of Victoria).

-

Consultation 30 (Eastern Community Legal Centre).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Minister of the Presidency, Justice and Relations with the Courts (Spain), Policy on the Use of Artificial Intelligence in the Administration of Justice (Policy, 2024) 5 <https://www.mjusticia.gob.es/es/JusticiaEspana/ProyectosTransformacionJusticia/Documents/Spains_Policy_on_the_use_of_AI_in_the_Justice_Administration.pdf>.

-

Consultation 8 (Federation of Community Legal Centres Workshop).

-

Victorian Government, Aboriginal Affairs Report 2023 (Report, 2024) 7, 25 <https://www.firstpeoplesrelations.vic.gov.au/sites/default/files/2024-07/VIC-GOV_Aboriginal-Affairs-Report_2023.pdf>.

-

‘Definitions’, Maiam Nayri Wingara (Web Page) ‘Indigenous Data Sovereignty’ <https://www.maiamnayriwingara.org/definitions>.

-

Ibid ‘Indigenous Data Governance’.

-

Australian Government, Framework for Governance of Indigenous Data: Practical Guidance for the Australian Public Service (Report, May 2024) 7.

-

‘Definitions’, Maiam Nayri Wingara (Web Page) ‘Indigenous Data’ <https://www.maiamnayriwingara.org/definitions>.

-

CSIRO, Introducing Mandatory Guardrails for AI in High-Risk Settings: Proposals Paper (Submission to the Department of Industry, Science and Resources Introducing Mandatory Guardrails for AI in High-Risk Settings: Proposals Paper, October 2024) <https://consult.industry.gov.au/ai-mandatory-guardrails/submission/view/41>.

-

Ibid.

-

Consultation 35 (Victoria Legal Aid).

-

Civil Procedure Act 2010 (Vic) s 7(1).

-

Charter of Human Rights and Responsibilities Act 2006 (Vic) s 25(2)(c).

-

For example, the 2025 Report on Government Services, looking at data for the 2023-24 period, indicates that the backlog of criminal matters more than 12 months old in the Supreme and County courts is relatively high compared to most other jurisdictions (noting that court backlog and timeliness of case processing can be affected by a number of factors, some of which may not be due to court delay): Steering Committee for the Review of Government Service Provision, Report on Government Services 2025: Justice (Part C) (Report, Productivity Commission, 2025) 73.

-

Canadian Judicial Council, Guidelines for the Use of Artificial Intelligence in Canadian Courts (Guidelines, September 2024) 8 <https://cjc-ccm.ca/sites/default/files/documents/2024/AI%20Guidelines%20-%20FINAL%20-%202024-09%20-%20EN.pdf>.

-

Consultation 8 (Federation of Community Legal Centres Workshop).

-

Senate Select Committee on Adopting Artificial Intelligence (AI), Parliament of Australia, Report of the Select Committee on Adopting Artificial Intelligence (AI) (Final Report, November 2024) 143–48 <https://www.aph.gov.au/Parliamentary_Business/Committees/Senate/Adopting_Artificial_Intelligence_AI/AdoptingAI/Report>.

-

Organisation for Economic Co-operation and Development (OECD), Measuring the Environmental Impacts of Artificial Intelligence Compute and Applications: The AI Footprint (OECD Digital Economy Papers No 341, 15 November 2022) <https://www.oecd.org/en/publications/measuring-the-environmental-impacts-of-artificial-intelligence-compute-and-applications_7babf571-en.html>.

-

Macquarie Dictionary Online (Web Page, 2025) (online at 24 September 2025) <https://app-macquariedictionary-com-au.eu1.proxy.openathens.net/?search_word_type=dictionary&word=effectiveness> ‘effective’.

-

‘2020 – 2025 Strategic Statement’, Supreme Court of Victoria (Web Page, 2025) <http://www.supremecourt.vic.gov.au/about-the-court/how-the-court-works/strategic-statement>.

-

Submissions 6 (Victorian Legal Services Board and Commissioner), 27 (Federation of Community Legal Centres and Justice Connect). Consultation 8 (Federation of Community Legal Centres Workshop).

-

Scottish Courts and Tribunals Service, Scottish Courts and Tribunals Service: Our Approach to the Development of Services Using Artificial Intelligence (Policy, April 2025) 2 <https://www.scotcourts.gov.uk/media/xalno3ff/scts-ai-policy.pdf>.

-

Consultation 4 (Victorian Legal Services Board and Commissioner).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect). Consultation 31 (Victorian Equal Opportunity & Human Rights Commission).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Ibid. Consultation 5 (Victorian Bar Association).

-

Department of Industry, Science and Resources (Cth) and National Artificial Intelligence Centre (NAIC), AI and ESG: An Introductory Guide for ESG Practitioners (Report, October 2024) 21 <https://www.industry.gov.au/sites/default/files/2024-10/ai-and-esg-an-introductory-guide-for-esg-practitioners.pdf>.

-

‘Interim Principles and Guidelines on the Court’s Use of Artificial Intelligence’, Federal Court of Canada (Guidelines, 20 December 2023) 2 <https://www.fct-cf.gc.ca/en/pages/law-and-practice/artificial-intelligence>.

-

European Commission for the Efficiency of Justice (CEPEJ), European Ethical Charter on the Use of Artificial Intelligence in Judicial Systems and Their Environment (2019, adopted at the 31st plenary meeting of the CEPEJ, Strasbourg, 3-4 December 2018) 7.

-

Submission 15 (Human Rights Law Centre).

-

Submission 26 (Supreme Court of Victoria).

-

Submission 10 (Castan Centre for Human Rights Law, Monash University).

-

Submission 15 (Human Rights Law Centre).

-

Submission 27 (Federation of Community Legal Centres and Justice Connect).

-

Ibid.

-

Ibid.

-

Office of the Commissioner for Federal Judicial Affairs Canada, Action Committee on Modernizing Court Operations, Use of Artificial Intelligence by Courts to Enhance Court Operations (Statement, 20 November 2024) 3 <https://fja-cmf.gc.ca/COVID-19/pdf/Use-of-AI-by-Courts-Utilisation-de-lIA-par-les-tribunaux-eng.pdf>.
