9. Governance to support AI innovation

Overview

•The growth of AI use in courts and tribunals requires effective governance to support safe use and public trust.

•There are opportunities to improve AI governance in Victoria’s courts and VCAT to reduce risks and embrace opportunities for innovation.

•This chapter recommends AI governance components, which together may enhance the safe use of AI in Victoria’s courts and VCAT. These include:

–AI governance bodies with multidisciplinary and multijurisdictional representation and documented roles and responsibilities to facilitate coordination and consistency

–an AI policy to document principled guidelines to court and tribunal staff on the safe use of AI and disclosure and consultation processes

–an AI assurance framework to assess risks and the suitability of potential AI uses.

Why do courts and tribunals need AI governance?

9.1Our terms of reference ask us how to guide the safe use of AI in Victoria’s courts and tribunals while maintaining public trust and ensuring integrity and fairness in the court system.

9.2There are many definitions of governance. One understanding of governance relates to putting constraints around the exercise of power.[1] We are interested in governance as it relates to courts, VCAT and Court Services Victoria putting controls around decision making about AI use.

9.3AI governance is comprised of several components. These components include an ‘organisational structure, policies, processes, regulation, roles, responsibilities and risk management framework’.[2]

9.4Appropriate governance can help safeguard the implementation and active management of AI across its lifecycle in Victoria’s courts and VCAT.

9.5As discussed in Chapter 3, the use of AI raises new risks and challenges. In some of Victoria’s courts and in VCAT, governance processes have been adapted to respond to these risks and opportunities.

9.6Although there is no one-size-fits-all AI approach for Victoria’s courts, a coherent governance approach can support consistent understanding and enable innovative approaches to be shared.

9.7We heard about the need for governance to ensure the safe use of AI in courts and tribunals and address key risks associated with AI.[3] We heard that the complexity and evolving nature of AI requires a governance approach that facilitates continual monitoring and review. This can ensure AI use adapts to evolving legal, societal and technological contexts.[4]

9.8We were told that appropriate governance was essential to ensure the use of AI in courts and tribunals did not negatively impact human rights.[5] We also heard governance is critical to mitigate security and data privacy concerns related to AI use.[6]

9.9From our consultations, other benefits of implementing AI governance include increased:

•transparency and public trust in the administration of justice[7]

•coordination across jurisdictions and increased sharing of resources and information, as well as reduced risk of duplicated effort and inconsistency[8]

•education and awareness across the organisation on the risks, benefits and safe use of AI[9]

•capacity to comply with existing legislative obligations.[10]

9.10In this chapter, we discuss governance components which together could help support the safe use of AI in Victoria’s courts and VCAT. This involves updating governance bodies, allocating roles and responsibilities, and implementing an AI policy and an AI assurance framework.

9.11Guidelines for the use of AI by judicial officers are recommended in Chapter 8. In this chapter, the Commission recommends that guidelines for court and tribunal staff should be included in an AI policy for Victoria’s courts and VCAT.

Governance arrangements in Victoria’s courts and VCAT

9.12Each court jurisdiction and VCAT operate independently of each other. The head of each jurisdiction (for example, the Chief Justice) is given legislative powers relating to the ‘business of the Court’.[11] Each jurisdiction:

•has its own internal governance structure

•has a Chief Executive Officer who manages its staff and administration services (appointed by Courts Council)

•develops its own strategic plan to reflect its own priorities.[12]

9.13Some of Victoria’s courts and VCAT have adapted their internal governance structures or created new governance bodies to consider risks, opportunities and potential AI use cases (as shown in Table 18). For this report, an ‘AI use case’ refers to the use of AI that is designed, developed, deployed or procured to support official work of Victoria’s courts or VCAT. This may either be standalone, or part of a wider solution.

Table 18: AI governance bodies in Victoria’s courts and VCAT.

| Jurisdiction | Governance body |
|---|---|
| Supreme Court | The Digital Strategy Steering Committee has a role in considering AI risks, opportunities and use cases within the court. |
| County Court | The Technology Advisory Committee advises the Chief Judge and is focused on the judicial experience of technology in the court process. This Committee is responsible for considering AI use cases, for example it reviewed the court’s speech-to-text AI pilot.[13] |
| Magistrates’ Court | The Magistrates’ Court is actively engaged on the CSV AI Working Group (see paragraph [9.24]). Existing internal governance and administrative forums also provide a role in developing Magistrates’ Court jurisdiction policies and actions before they are referred to the judiciary.[14] |
| Coroners Court | An informal AI working group consisting of lawyers and coroners has been set up. This may become a formal governance body to monitor and review AI issues and operate similarly to the Court’s existing Research Committee. The Coroners Court’s Executive Team and Risk Committee has current oversight and governance in exploring AI use cases.[15] |
| VCAT | An AI Committee was established in early 2024 and comprises representatives from VCAT’s members, strategic and operational staff. It identifies potential AI use cases and assesses benefits and limitations. It also makes recommendations for the effective, ethical, and lawful use of AI and suggests policy changes and guidelines.[16] |

Court Services Victoria governance

9.14Court Services Victoria (CSV) is an independent statutory body.[17] It provides and coordinates independent administrative services and facilities to Victoria’s Courts Group. The Courts Group is made up of the six court jurisdictions, the Judicial College of Victoria and the Judicial Commission of Victoria.

9.15CSV provides administrative support to the jurisdictions. One of CSV’s functions is to provide information and communication technology services. CSV’s Digital Group supports jurisdictions with general technology needs and in implementing and updating technology.[18]

9.16CSV is required to comply with the Victorian Government Risk Management Framework, which outlines minimum risk management requirements.[19] CSV is responsible for actively managing risks related to its own corporate services and for coordinating and managing an ‘organisational risk management plan for risks that affect the whole of Courts Group’.[20]

9.17The arrangement Victoria’s courts have with CSV is unique. In most other Australian jurisdictions administrative court services sit within government departments.[21] Until 2014, the then Victorian Department of Justice delivered court administrative and technology services to the courts.[22]

9.18We heard that courts were interested in applying their own analysis when considering potential AI tools rather than simply implementing tools adopted by other government departments.[23] CSV can provide an independent courts-specific assessment of proposed AI tools.

9.19There are several bodies within CSV that play a role in supporting the safe use of AI in administrative arrangements.

Courts Council

9.20Courts Council is the governing body of CSV. It directs CSV’s strategy, governance and risk management. It is chaired by the Chief Justice, consists of the six heads of jurisdiction and has non-judicial independent representation.[24] The Council has a role in ‘the implementation of AI systems across the court system’.[25]

Audit and Risk Committee

9.21The Audit and Risk Committee is a subcommittee of Courts Council. It consists of ‘Council representatives, members of the judiciary and an independent external specialist with expertise in ICT’.[26]

9.22This Committee supports Council’s capacity for informed decision-making on AI. Part of its role is to maintain risk management and accountability.

9.23The Committee is responsible for reviewing ‘Organisational Risk Profiles’. CSV intends to establish an Organisational Risk Profile for AI.[27] This would mean that causes and controls for AI-specific risks are documented and monitored. The Committee also monitors the Courts Group Digital Risk Register, which aims to include ‘shared AI related risks across the Courts Group’.[28]

AI Working Group

9.24In response to the challenges and opportunities of AI, CSV established an AI Working Group.[29]

9.25The AI Working Group is comprised of members from each jurisdiction and CSV staff. There are 17 members primarily from business transformation or information technology digital services functions. However, it includes a tribunal member of VCAT’s Planning and Environment list.[30]

9.26CSV describes the AI Working Group as the ‘central coordination point for AI initiatives across CSV’.[31] Some of its responsibilities are:

•managing and monitoring AI risks on the Courts Group Digital Risk Register

•developing frameworks and principles, evaluating AI tools and assessing information security requirements

•monitoring pilot projects, ensuring adherence to ethical and regulatory requirements

•facilitating knowledge-sharing and providing guidance on best practice

•developing guidelines (including for lawyers) and practice notes.

9.27CSV also has working groups focused on information security and data management.[32]

AI Proof of Concept Lab

9.28CSV is developing an AI Proof of Concept Lab to test potential AI use cases. CSV stated that the lab will be a controlled environment established separately from its existing network and will only use test data to prove AI use cases.[33]

9.29CSV intends to pilot AI use cases on a small scale in closed environments before scaling them up and submitting them to user testing or applying them to development environments.[34]

9.30The use of secure environments to develop AI tools is sometimes referred to as a ‘development sandbox’.[35] Its aim is to provide a secure environment to test AI while minimising privacy and data security risks. Development sandboxes can involve testing and developing AI tools with:

•clean data sources (such as anonymised or pseudonymised data to ensure data protection and confidentiality)

•technical protections (such as a one-way data gate so that data can go in but not out of the sandbox)

•controls on access (ensuring suitable access controls and audit logs, and ensuring that if third parties have access to the sandbox, they are subject to appropriate data protection and confidentiality clauses).[36]

9.31The UN Special Rapporteur on the independence of judges and lawyers has recommended that judicial systems institute ‘sandbox environments to pilot AI programs and experiment with appropriate regulations’.[37]

9.32Peak national and international AI standards emphasise the importance of rigorous pre- and post-deployment testing to identify errors, risks and limitations of AI tools and to test and monitor whether the tool is serving its intended purpose.[38] These standards also encourage the development of clearly defined metrics and criteria to monitor the performance of the tool.[39]

9.33Australia’s National Framework for the Assurance of Artificial Intelligence in Government states that small scale pilots should be used to evaluate AI tools, to identify and mitigate problems before tools are scaled up.[40] However, the framework notes that a balance is needed because testing tools in highly controlled environments may not accurately reflect the full risks and opportunities, while testing in less controlled environments may pose governance challenges.

9.34Internationally, courts have highlighted the importance of piloting AI tools. The Office of the Commissioner for Federal Judicial Affairs Canada encourages courts to trial multiple tools simultaneously and under different conditions to determine which will best suit their needs. It also encourages courts to pilot tools to troubleshoot issues before launching them.[41] In the United States (US), the National Center for State Courts has made an AI sandbox available to court staff to allow them to ‘practice with GenAI in an end environment where your data will not be used to train commercial models.’[42]

9.35From our consultations we heard that several of Victoria’s courts and VCAT have implemented AI pilots.[43] Victoria’s courts, VCAT and CSV should use secure environments to pilot and test tools before deployment and implement ongoing testing and monitoring to help ensure AI tools continue to operate correctly and serve their intended purpose.

International AI governance structures in courts and tribunals

9.36Other jurisdictions have implemented a range of governance structures to support the safe use of AI within their judicial systems. These may be helpful to Victoria’s courts and VCAT.

9.37Many of these approaches bring judicial and administrative sides of the courts together to form a coordinated response to AI usage and implementation. Some examples of international AI governance features are considered in Table 19.

Table 19: International court and tribunal AI governance features

| Jurisdiction | AI governance features |
|---|---|
| New Zealand | Digital strategy: In New Zealand the executive arm of government, via the Ministry of Justice, delivers IT services to courts and tribunals. The Courts of New Zealand developed a digital strategy which details how the judiciary and the New Zealand Ministry of Justice work together on technology.[44] The strategy contains the judiciary’s objectives and guiding principles for the use of technology within New Zealand courts and tribunals.[45] It identifies investigating and, so far as practical, implementing AI as part of its longer-term aspirations.[46] Technology and innovation judicial role and function: Justice Goddard led the development of the digital strategy and chairs the Information and Digital Technology Committee.[47] In this role, there is time allowed to support implementation of the strategy. The Chair of this committee has responsibility for reporting to the Chief Justice and liaising with the Ministry of Justice and court jurisdictions to consider digital use cases. Multijurisdictional and multidisciplinary body: An Artificial Intelligence Advisory Group was commissioned by the New Zealand Chief Justice to develop AI guidelines.[48] The group is multijurisdictional and multidisciplinary with ‘representatives from the Senior Courts and District Court, judicial support staff, court registries, and the Ministry of Justice’.[49] This group also works with the Heads of Bench Committee and respective tribunal chairs. |
| Canada | Multijurisdictional and multidisciplinary body: The Office of the Commissioner for Federal Judicial Affairs established an Action Committee on Modernizing Court Operations which combines members of the judiciary with the executive. It is co-chaired by the Chief Justice of Canada and the Minister of Justice and Attorney-General of Canada.[50] It is supported by a technical working group and produces guidelines and principles for the planning and implementation of AI projects. Canada also has the Canadian Judicial Council, which has released its own AI guidelines.[51] |
| Singapore | Technology and innovation judicial role and function: In Singapore one judge is allocated a senior role in charge of ‘Transformation and Innovation in the Judiciary’.[52] The judge sits on a range of business operations committees and reports regularly to the Chief Justice to ensure the Chief Justice is engaged in AI decision making. There is also a Chief Transformation and Innovation Officer.[53] The approach focuses not just on AI but also on judicial innovation. The Chief Justice and other jurisdictional leads can make recommendations to the allocated judge who can then raise them with the administrative arm. Use cases are then developed and budgeted. Where proposals seem to be viable, the Chief Transformation and Innovation Officer may bring these to the attention of the Transformation and Innovation Judge. |
| England and Wales | AI Action Plan for Justice: Released in July 2025, the plan sets out the Ministry of Justice’s strategic priorities for AI adoption over three years across courts, tribunals, prisons, probation and supporting services.[54] Multijurisdictional and multidisciplinary body: A cross jurisdictional Judicial AI Advisory Group was established to assist the judiciary on the use of AI.[55] This advisory group helped to develop guidance for the judiciary on the use of AI.[56] In 2024 the Ministry of Justice established the Justice AI Unit, which consists of ‘an interdisciplinary team of AI specialists, designers, technologists, and operational experts working to embed responsible AI across the justice system.’[57] |

Opportunities to strengthen AI governance bodies, roles and responsibilities

9.38While each court jurisdiction and VCAT operate independently, there are opportunities for jurisdictions to collaborate with Courts Council and CSV to ensure the safe use of AI.

9.39The Victorian Auditor-General’s Office has described CSV’s governance structure as complex. One reason for this is that: ‘While each jurisdiction is independent, they work together and depend on each other.’[58]

9.40There are several opportunities to improve the current approach through:

•coordination and consistency

•transparency and accountable decision making

•diversity of skills and expertise

•judicial representation.

Coordination and consistency

9.41We heard that there is value in ensuring a coordinated and consistent response to AI. If courts, VCAT and CSV work in isolation in their approach to AI, this could lead to inconsistency and duplication of resources. The Law Institute of Victoria noted:

The strategy for adopting AI technologies in Victoria’s courts and tribunals should be developed with a view not only to ensuring consistency of regulation with other Australian jurisdictions, but also to avoiding duplication of investment and effort within Victoria. This will be even more important in the current fiscal environment in Victoria, as we often see across the courts and tribunals, each jurisdiction developing their own technology solution, for example case management systems, rather than looking at how best to leverage technologies across all jurisdictions.[59]

9.42A lack of coordination reduces opportunities to collaborate, pool resources, pilot and implement innovative approaches and share learnings from pilots. Developing and implementing AI solutions in isolation could also lead to variations in data security and privacy processes.

9.43While different jurisdictions may have differing needs, there will be some AI tools that are applicable across jurisdictions. In Chapter 2 we discussed how the County Court, Magistrates’ Court and VCAT have all undertaken their own AI transcription pilots.

9.44It was recognised that CSV could play a role in promoting consistency across jurisdictions.[60] The County Court said:

To achieve consistency, the most practical approach may be a consultative approach between CSV and the relevant courts and tribunal that respects the independence of the judiciary, while aiming to provide practical assistance to ensure courts are safely utilising AI technology.[61]

9.45The development of the AI Working Group, which has representation from each jurisdiction and has been endorsed by Courts Council, supports a coordinated approach. However, it is unclear how this group will effectively ensure consistency across courts. It is also not clear how AI governance bodies that have been developed across Courts Group and within CSV interact with each other and how roles and responsibilities are allocated.

Transparency and accountability of decision making

9.46It is important that the courts, VCAT and CSV are transparent about who has authority for decisions. AI governance requires a clear chain of responsibility for decision making and accountability.[62]

9.47Many stakeholders supported courts and tribunals adopting transparent measures to communicate the approval and use of AI. The Office of the Victorian Information Commissioner stated: ‘The community expects government organisations to be transparent and accountable, and to publicly report on their use of AI.’[63]

9.48The Supreme Court told us that ‘CSV has responsibility for procurement and maintenance of IT infrastructure.’[64]

9.49While CSV has developed the AI Working Group, this operates at a low level of seniority and has a limited decision-making function. It is not clear how the AI Working Group fits into existing CSV and individual jurisdictional decision-making processes. The terms of reference for the AI Working Group state broadly that the group reports to jurisdictional and CSV executives, as well as jurisdictional Digital/Information Technology Committees.

Diversity of skills and expertise

9.50AI governance requires governance bodies to represent multidisciplinary capabilities and expertise.[65] Because AI can present risks that combine technical, legal and ethical considerations, it is important that diverse perspectives are considered when making decisions about AI.

9.51In many AI governance models, there is a focus on ethical expertise to support decision making. This could include consideration of the unique risks that impact on the judicial function.

9.52Technical and business operations experts might consider some process concerns (such as bias, accuracy, privacy and explainability). But they may lack a broad understanding of human-centred concerns (autonomy, fairness, wellbeing, truth and democratic values). They may also view risks such as bias and explainability from a technical perspective rather than in terms of procedural fairness. AI developments in courts require consideration of AI impacts on individuals, institutions and society, particularly where AI may impact on trust in courts.

9.53The Office of the Victorian Information Commissioner and the Judicial College of Victoria identified the need for multiple skill sets to be brought together when considering decisions about AI.[66] The need for multidisciplinary skills to be reflected in AI governance and decision making was also supported by representatives of Microsoft.[67] The Office of the Victorian Information Commissioner warned:

The accountability and responsibility of implementing, approving or managing AI systems should not fall solely on the IT department or equivalent. Given the breadth and scale of AI applications across the whole organisation, it is advisable to nominate the head of the agency as the responsible and accountable officer for the adoption of AI, with a whole-of-organisation approach taken to identifying and managing the risks involved.[68]

9.54This was further supported by representatives of the Judicial College of Victoria, who stated that AI governance requires an understanding of judges’ needs, court processes and AI expertise and that ‘normal governance frameworks within courts are unlikely to be well-equipped to deal with AI developments’.[69]

9.55Currently, the AI Working Group consists of members largely from transformation or information technology/digital services functions. Effective AI governance should include representation from multiple skill sets, such as technology specialists, legal, policy and subject domain specialists.

Judicial representation

9.56It is important that judicial officers are involved in decisions about the use of AI in courts and tribunals.

9.57The UN Special Rapporteur on the independence of judges and lawyers has stated that judiciaries should be confronting issues around the use of AI in judicial systems as a matter of priority.[70] They recommended that to preserve judicial independence, ‘decisions about whether to use AI in judicial systems, and which tools to use, should be made by judges’.[71]

9.58In considering how AI may shape the future of the justice system, Professor Tania Sourdin argues that it is crucial for judges to be involved in considering how technological advancements may be adopted in courts.[72] Sourdin states that for judges to be able to provide input on technological advancements:

Judges must not only acquire foundational knowledge and understandings about AI, but they must also consider the implications of its use on both the justice system and the judiciary. As such, judges must have strategies in place to deal with the ethical and other issues raised by Judge AI.[73]

9.59CSV’s role has been legislated to ensure business operations in relation to technology infrastructure are supported. But current governance arrangements do not support the use of AI when specifically directed at the judiciary or external court users.

9.60Some information and technology committees established within different court jurisdictions have been focused on the ‘judicial experience of technology in the court process’.[74] However, CSV’s multijurisdictional AI Working Group does not have broad judicial officer representation. This may be appropriate given the current role of the group. But as a result, it may be unable to reflect the views of the judiciary in respect to the uses, limitations and opportunities for AI in courts and tribunals.

9.61Creating a multijurisdictional body with judicial officer representation will help provide an opportunity to support the needs of the judiciary in relation to the use of AI in courts and tribunals.

Reform options for AI governance bodies across Victoria’s courts and VCAT

9.62The experience of other jurisdictions is useful to inform how AI governance bodies and the allocation of roles and responsibilities could be improved in Victoria’s courts and VCAT.

9.63Options for reform include establishing:

•a technology and innovation committee

•technology and innovation judicial roles and functions.

Establish a technology and innovation committee

9.64Jurisdictions such as Canada and New Zealand have implemented multidisciplinary and multijurisdictional committees to assess technology and AI use in courts and tribunals (as shown in Table 19). These groups also have a role in developing or reviewing AI guidelines.

9.65In the US, the Conference of State Court Administrators recommends that courts establish a taskforce with diverse membership to assist in developing a responsive and flexible institutional framework for the use of GenAI in the court.[75] It recommends that such a taskforce should consist of court leaders and be informed by people outside the legal system such as university and industry professionals.

9.66In Australia, the Federal Circuit and Family Court has established an internal AI committee.[76] This committee consists of judicial members, a technical member (the court’s head of digital), representatives from the Chief Justice’s office and a senior judicial registrar.

9.67There is currently no specific sub-committee of Courts Council responsible for technology and AI related initiatives. However, CSV is considering establishing a technology committee which would have a focus on AI.[77]

9.68CSV previously had an Information Technology Portfolio Committee, which was a sub-committee reporting to Courts Council.[78] It was merged into the Strategic and Innovative Projects Committee in 2019. Its responsibilities included advising Courts Council on the development and implementation of ‘court facilities and technology related initiatives’.[79] The Strategic and Innovative Projects Committee, chaired by the Chief Justice, was multidisciplinary with judicial representation and contained experts from outside of CSV.[80] However, it was dissolved in April 2024.[81] CSV advised that the committee was disbanded following the COVID-19 pandemic because of financial constraints.[82]

9.69CSV anticipates that re-implementing a technology committee will support coordination and consistency across the jurisdictions. The committee would consider procurement matters, potential use cases, and ethical, financial and contractual issues.[83]

9.70Establishing a technology and innovation committee would address current gaps in the governance for AI if it were set up to be:

•multijurisdictional with representation from each of the jurisdictions

•multidisciplinary, with members who have appropriate expertise and a foundational understanding of AI

•inclusive of judicial officer representation.

9.71It would be useful to consider bringing in external technical expertise as needed. This would help the committee keep up to date on evolving technology risks and opportunities.

9.72It will also be relevant for this committee to be aware of the experiences and concerns of court users. Below (from paragraph [9.134]) we discuss how consultation should be considered in the design and development of AI tools for Victoria’s courts and VCAT. Outcomes of consultations should inform the committee’s decision making on AI use cases.

9.73It will be important for this committee to clearly document accountability and responsibility for decision making. It will also be critical to ensure that there are resources available to support AI governance with appropriate secretariat support.

9.74To meet community expectations for transparency, one option to clearly document responsibilities would be for CSV to make the terms of reference of the committee publicly available. This approach is adopted in Canada, with the terms of reference for the Action Committee on Modernizing Court Operations made available online.[84]

9.75It should be clear in the terms of reference that the committee would be responsible for:

•reporting on AI risks

•making recommendations to Courts Council on AI procurement, potential use cases, and ethical and financial issues.

9.76The existing AI Working Group could continue its work as an information sharing forum and report up to the committee.

9.77Because the head of each jurisdiction has legislated responsibilities (as noted above, paragraph [9.12]) for the business of their court, they are responsible for implementing recommendations made by Courts Council in their jurisdiction. This reflects that there are differences in how each of the jurisdictions operates and some AI use cases may not be suitable for every jurisdiction.

9.78Even though each jurisdiction has its own legislated responsibilities this does not negate the benefits and importance of coordination (as discussed in paragraph [9.41]).

9.79The committee should report to Courts Council, which should actively coordinate responses and identify opportunities for consistency and alignment in Victoria’s courts and VCAT where possible.

Establish technology and innovation judicial roles

9.80To ensure judicial perspectives are incorporated into the responses of Victoria’s courts and VCAT to AI, lead judicial technology and innovation roles could be created. Sourdin argues there is a ‘need to appoint judges with backgrounds that include sophisticated understandings of new technologies and the time and the ability to design systems that are responsive to judicial and user needs’.[85] Sourdin states this is necessary to ensure judges can adequately participate in the challenges and opportunities raised by technological advancements and to prevent an overreliance on private technological companies or on the executive arm of government.

9.81Some courts have established specialised technology and innovation judicial leads who work with the administrative arm of court services or a government unit that provides technological support to courts. As discussed in Table 19, Singapore’s courts have appointed an ‘Innovation and Technology Judge’. New Zealand also created a specialised role to consider digital technology issues.

9.82In Victoria’s courts and VCAT, lead technology and innovation judicial roles could be created by the head of each jurisdiction appointing a dedicated judicial officer to lead the implementation of AI.

9.83The proposed technology committee requires judicial representation. This could be achieved by having the appointed judicial leads as committee members. This would support a cohesive approach as the judicial lead could coordinate feedback from their jurisdiction and ensure it is factored into a courts-wide approach to AI.

9.84As noted above (in Table 18) several of Victoria’s courts and VCAT already have technology committees in place. These forums will remain important to coordinate feedback within each jurisdiction. It would be useful for judicial leads to bring their jurisdictional perspectives together at the multijurisdictional forum. This can enable a coordinated and, where possible, consistent response to AI usage and implementation.

9.85The judicial responsibilities of the technology and innovation judicial leads would have to be adjusted to ensure that they have sufficient time out of court to fulfil the functions of the AI technology and innovation role.

9.86Other international jurisdictions have developed digital strategies to guide decision making and set direction on the adoption of technology within courts and tribunals.[86] The development of a digital strategy can assist to ensure that AI use cases are strategically developed to consider future court needs.

9.87The development of a Victorian courts digital strategy could be the focus of the technology committee, like the development of New Zealand’s Digital Strategy for Courts and Tribunals.[87]

Recommendations

16.A technology and innovation committee should be established by the Courts Council to support ongoing governance of AI across Victoria’s courts and VCAT.

17.Coordination and consistency in AI governance should be promoted by the Courts Council across Victoria’s courts and VCAT.

18.A technology and innovation judicial officer or VCAT member should be appointed by the head of each of Victoria’s court jurisdictions and VCAT to support AI development and innovation.

Developing an AI policy for courts, tribunals and Court Services Victoria

9.88While a strategy can support ongoing developments, an AI policy can be used to set accepted use, roles and responsibilities and obligations in relation to the use of AI.

9.89The Commission is not aware of CSV or any of Victoria’s courts or VCAT having implemented an AI policy. CSV has implemented some aspects of the Administrative Guideline for the safe and responsible use of Generative Artificial Intelligence in the Victorian Public Sector issued by the Victorian Government.[88]

9.90This guideline is based on Australia’s AI Ethics Principles and sets out minimum requirements for the use of GenAI by Victorian public sector personnel. Some of the requirements are that agency-approved tools are to be used ahead of publicly available GenAI tools. Additionally, personnel can only input publicly available information into GenAI tools that have not been approved, and personnel remain responsible and accountable for their work.[89]

9.91But, as the Supreme Court has noted, the Victorian Government Administrative Guideline does not apply to courts or CSV.[90]

9.92While not directly relevant to Victoria’s courts, the Australian Government’s Policy for the responsible use of AI in government[91] contains principle-based guidance for how non-corporate Commonwealth entities can safely engage with AI. It includes mandatory requirements for entities to designate accountability for implementing the policy to accountable officers. It requires them to make publicly available statements outlining their approach to AI adoption. Additionally, in July 2025, the Australian Government released the Technical standard for government’s use of artificial intelligence.[92] It provides technical requirements to support the implementation of the Australian Government’s AI Ethics Principles.

9.93We understand CSV is developing an AI policy that will apply to CSV staff.[93] Representatives of CSV stated it will likely contain high-level statements about the restrictions on staff use of AI.[94]

9.94Internationally, courts and government departments have developed policies about the use of AI in courts and tribunals. Examples of international court AI policies are described in Table 20.

Table 20: International court AI policies

| Jurisdiction and policy | Summary of approach |
|---|---|
| Canada<br>Use of AI by Courts to Enhance Court Operations[95] | Identifies benefits and challenges of courts’ use of AI and principles to assist courts to consider how to responsibly use AI. It contains key stages to roll out AI tools, including an initial needs assessment and planning phase focusing on community consultation. It then steps through AI project management phases:<br>•data handling throughout the AI lifecycle<br>•design (identifying the purpose of the tool, testing and training, and ensuring the technical requirements fit courts’ systems and structures)<br>•deployment (consideration of trials, pilots, transition plans, training and regular auditing)<br>•decommissioning considerations. |
| Scotland<br>Our Approach to the Development of Services Using Artificial Intelligence[96]<br>Scottish Courts and Tribunals Service | The policy sets out the overall approach the Scottish Courts and Tribunals Service takes to the development and use of AI. It contains seven guiding principles to ensure the use of AI is ethical and beneficial. It provides for governance and oversight through a hierarchy of control across different governance bodies. It also makes commitments to training, development, monitoring and review. It specifies that contracts with suppliers will include clauses that specify ethical AI use and compliance with relevant laws and standards such as data protection and privacy. |
| Spain<br>Policy on the use of AI in the Administration of Justice[97]<br>Ministry of Justice and Court Relations | Incorporates the five ethical principles of the European ethical charter on the use of Artificial Intelligence in judicial systems and their environment.[98] It contains rules for the use of AI in the administration of justice and creates obligations to set responsibility for AI use, development, implementation, quality control and auditing. It also contains examples of AI uses that:<br>•are prohibited<br>•require the approval of IT<br>•require management’s approval<br>•are generally permitted. |
| United States (Arizona)<br>Code of Judicial Administration[99]<br>Arizona Supreme Court | The Code of Judicial Administration was updated to include a chapter on the Use of Generative Artificial Intelligence Technology and Large Language Models. It applies to all court personnel and lists considerations for judicial leaders when determining whether to permit the use of GenAI. It also contains rules which restrict staff from inputting non-public content into non-approved AI systems. It sets out that the administrative director must keep a list of GenAI tools that are:<br>•approved for all purposes<br>•approved for public content only<br>•approved for non-production use only<br>•prohibited.<br>The document also provides direction on court-developed tools. |
| United States (California)<br>Judicial Branch Administration: Rule and Standard for Use of Generative Artificial Intelligence in Court-Related Work[100]<br>Judicial Council of California | The Judicial Council of California agreed that any court that does not prohibit the use of GenAI by court staff or judicial officers ‘must adopt a policy that applies to the use of GenAI by court staff for any purpose and by judicial officers for any task outside their adjudicative role’.[101] The Judicial Council of California has specified what must be contained in court AI policies, which includes direction on responsible use and disclosure. |
| United States (Connecticut)<br>Artificial Intelligence Responsible Use Framework[102]<br>State of Connecticut Judicial Branch | The policy includes guiding principles and information on AI across intake and exploration, impact assessment, procurement and implementation phases. It sets out the terms of reference for the Judicial Branch’s Artificial Intelligence Committee. It contains operating procedures on:<br>•determination characteristics—to determine whether a system employs AI for decision-making<br>•intake and inventory—to conduct an annual inventory of all systems that employ AI used by the branch<br>•impact assessment—to categorise AI systems into risk categories<br>•procurement and due diligence processes—to procure AI tools. |
| United States (various courts) | Several other US courts have introduced AI policies for court employees, such as the Supreme Courts in South Dakota[103] and Illinois.[104] The Illinois Supreme Court Policy on Artificial Intelligence directs that the use of AI by court staff ‘may be expected, should not be discouraged, and is authorized provided it complies with legal and ethical standards’.[105] |

9.95International AI policies provide examples that are helpful in considering the scope of an AI policy for CSV and Victoria’s courts and tribunals.

9.96As outlined in Chapter 4, peak standards organisations have released directions on AI governance. While not specific to courts, we heard that international standards could play an important role in shaping public trust in AI governance within courts and tribunals by setting a benchmark of acceptable practices.[106]

9.97These peak bodies direct organisations to develop and document a policy for the development and use of AI to:

a)ensure the use of the AI system is consistent with an organisation’s stated values and principles

b)define key terms and concepts and the scope of their purposes and intended uses

c)align AI governance to broader security, safety, privacy and data governance policies and practices, particularly the use of sensitive or otherwise risky data.[107]

9.98They also encourage organisations to:

a)establish a documentation inventory system

b)establish processes about public disclosure of AI use

c)implement external stakeholder consultation and engagement processes.[108]

9.99Based on these standards and stakeholder feedback, this chapter goes on to suggest key elements to be included in an AI policy for CSV and Victoria’s courts and VCAT, being:

•information security and data privacy processes

•principled guidance for use of AI by CSV and court and tribunal staff

•disclosure and consultation processes.

Alignment of AI use to information security, privacy and data management

9.100Court users and the public need to be able to trust that Victoria’s courts and VCAT can maintain the security and privacy of data. Professor Lyria Bennett Moses has commented: ‘The security of AI systems used by courts is … essential both from a practical standpoint and for the purpose of institutional and public confidence.’[109]

9.101International standards highlight that AI policies should refer to and align with an organisation’s existing privacy and data governance processes and policies.[110] This is consistent with AI policies developed by courts.

9.102In Canada the Office of the Commissioner for Federal Judicial Affairs has set out that appropriate data privacy and cybersecurity measures are needed to guide the use of AI by courts and that:

A strong data privacy and cybersecurity framework, including a clear protocol in the event of a breach, can mitigate risks associated with using an AI tool to store or process any sensitive information handled by courts. Consideration should be given to how AI-related policies or protocols fit within existing frameworks for information management and information technology.[111]

9.103In Chapter 5 it is recommended that Victoria’s courts and VCAT update existing privacy policies and develop AI policies to state how they seek to be consistent with the Victorian Information Privacy Principles (IPPs). In Chapter 5 we also refer to guidance released by the Office of the Victorian Information Commissioner on the use of AI tools.[112] This guidance may be helpful for Victoria’s courts and VCAT to consider how they can align with the IPPs.

9.104We also suggest that Victoria’s courts and VCAT should consider a privacy by design approach to court data (as defined in Chapter 3).[113] Victoria’s courts and VCAT should also consider:

•implementing robust security controls (including physical security, cybersecurity and insider threat safeguards across the AI lifecycle).[114]

•implementing processes and documenting how teams will support the management and protection of data usage rights for AI (including intellectual property, Indigenous Data Sovereignty, privacy, confidentiality and contractual rights).[115]

9.105In Chapter 3, we highlight that organisations need to consider the physical location of where data is stored and whether the use of an AI tool will result in information travelling outside of Victoria.

Principled guidance for use of AI by CSV and court and tribunal staff

9.106As highlighted in Table 20, many international court AI policies contain rules or guidance to staff about acceptable uses of AI.

9.107The Conference of State Court Administrators in the United States advised that to best achieve time and labour savings, court staff need to be provided with guidelines on what is acceptable AI use and what processes should be followed.[116]

9.108The Supreme Court noted existing duties on court and tribunal staff in relation to privacy and confidentiality:

CSV employees’ terms of employment include duties relating to confidentiality, which is reinforced in various ways. There are also CSV IT [information technology] policies that apply to Court staff, and CSV provides information to staff regarding the use of AI.[117]

9.109While there are general information technology policy requirements in place, many people supported the development of principled guidelines about the use of AI by court and tribunal staff.[118]

9.110As discussed in Chapter 6, the Commission’s principles could help to guide safe use of AI in Victoria’s courts and tribunals. An AI policy could serve an educative function and connect the principles to relevant considerations for CSV, court and tribunal staff.

9.111Guidance to CSV and court and tribunal staff should be separate to guidance for judicial officers which is discussed in Chapter 8. This is because judicial officers have different roles and responsibilities to court and tribunal staff.

9.112Table 21 provides examples of international court policies to demonstrate how the Commission’s principles could help guide the use of AI by CSV, court and VCAT staff.

Table 21: Examples of principle-based guidance for staff

| Principle | Guidance for staff |
|---|---|
| Impartiality and fairness | •Court staff ‘must thoroughly review all material to ensure it contains neither overt prejudice nor subtle bias.’[119]<br>•‘Use AI consistently with core values and ethical rules … promote AI tools that are accessible to all individuals, including those with disabilities, and that they do not inadvertently exclude any segments of the population or inadvertently perpetuate bias against anyone, including marginalized groups.’[120] |
| Accountability and independence | •‘Any use of GenAI output is ultimately the responsibility of the Authorized User. Authorized Users are responsible to ensure the accuracy of all work product and must use caution when relying on the output of GenAI.’[121]<br>•‘Always verify AI-generated content before use. Generative AI can sometimes generate false information and the output should not be relied on without verification. While AI can be used as a starting point, the output should never be used verbatim in the completion of reports/documents for the Court’.[122]<br>•‘The planning, procurement, and deployment of generative AI in … courts must firmly uphold the fundamental principle of judicial independence, encompassing its individual and institutional dimensions.’[123] |
| Transparency and open justice | •‘ensure transparency and accountability in the design, development, procurement, deployment, and ongoing monitoring of AI in a manner that respects and strengthens public trust. When using AI tools to create content, agency external facing services or dataset inputs or outputs shall disclose the use of AI.’[124]<br>•Discussion on disclosure is provided from paragraph [9.113]. |
| Contestability and procedural fairness | •Court/tribunal use of AI ‘shall be documented in ways that ensure the technology is understood by those that make decisions, monitor outcomes, or explain results.’[125]<br>•‘Any AI tool used in court applications must be able to provide understandable explanations for their decision-making output.’[126]<br>•This is discussed further from paragraph [9.153]. |
| Privacy and data security | •‘Respect data privacy: Be vigilant about confidentiality and data privacy. Remember that information input into AI systems is outside the court’s secure network and may be exposed to the public.’[127]<br>•‘Authorized Users may not input any Non-Public Information into Non-Approved GenAI.’[128]<br>•‘Employees and affiliated entities must not use LLMs [large language models] in any way that infringes copyrights or on the intellectual property rights of others’.[129]<br>•‘Should any problems arise related to the use of generative AI, such as unauthorized access or misuse of sensitive, confidential, or privacy restricted information, users must alert the Help Desk and their supervisor immediately.’[130] |
| Access to justice | •‘Serving the public fairly and effectively should guide all decisions related to the use of AI. Consider all potential users of the tool and incorporate their needs into its design, implementation, and monitoring.’[131] |
| Efficiency and effectiveness | •‘AI will not be the appropriate solution to every problem and should not be used simply because it is new, exciting, or available. Possible use of AI should be founded on identifying the problem and assessing possible solutions – including other technologies or non-technological approaches, rather than simply integrating AI into ineffective processes.’[132]<br>•‘The use of AI tools shall be to enhance and improve the value added by our Judicial Branch employees’.[133] |
| Human oversight and monitoring | •‘Review of AI output through competent human oversight is important at all stages for validating results and making any necessary corrections. The level of human oversight required will depend on various factors: For example, greater oversight may be required for tools not developed specifically for court or legal purposes. When developing tools for courts, greater oversight may be required in the early stages to evaluate accuracy.’[134] |

Recommendation

19.An AI policy for Court Services Victoria staff and court and tribunal staff should include the Commission’s principles on the safe and acceptable use of AI.

Disclosure of AI use by Victoria’s courts and VCAT

9.113Clearly communicating use of AI by courts and tribunals is critical to upholding transparency and open justice and can support public trust in the administration of justice. Public confidence in the courts depends on what the public knows about how the courts use AI.[135]

9.114Many court users expected courts and tribunals to disclose and consult on their use of AI. A sample of stakeholder views is illustrated in Table 22.

Table 22: Stakeholder views on disclosure of court/tribunal use of AI

| Stakeholder | Views on disclosure of AI use by courts and tribunals |
|---|---|
| Victoria Legal Aid | ‘Consistent with human rights and a client-centred approach, we consider that targeted consultation on AI adoption is vital to foster trust and respect in the justice system. In particular, consultation should occur with groups which represent the diversity of our community and those who engage with the system’.[136] |
| Northern Community Legal Centre | ‘If further technological reforms are to be introduced, court users should be included in consultation processes prior to implementation as well as during regular monitoring activities.’[137] |
| Law Institute of Victoria | ‘courts and tribunals should engage in public consultations before implementing AI tools. Courts and tribunals should also disclose AI use to all court users … Public consultation would allow affected groups to express concerns and would ensure that AI implementation aligns with community expectations for fairness and transparency.’[138] |
| Federation of Community Legal Centres and Justice Connect | ‘Courts and tribunals should ensure processes and decisions supported by AI systems are transparent to court users.’[139] |
| Centre for the Future of the Legal Profession and UNSW Law and Justice | ‘it is imperative to disclose when AI is being used in a human process within courts or tribunals, which are rule of law promoting institutions.’[140] |

9.116Court users expressed strong support for disclosure by courts. But there were mixed views among courts on whether disclosure was necessary. Representatives of the Supreme Court stated:

The use of AI in administrative processes does not need to be disclosed. The administrative process for listing matters is not disclosed now and AI does not change that. These processes can be a mix of judicial and administrative actions.[141]

9.117In contrast, representatives of the Coroners Court provided in-principle support for disclosure where AI is used by court staff and judicial officers. But they noted whether disclosure is necessary may depend on the type of AI tool being used.[142]

9.118Some discussion focused on what sorts of uses would require disclosure. Some stakeholders said that AI uses by courts and tribunals that were merely administrative would not need to be disclosed.[143] In Connecticut, the judicial branch is required to publish an inventory of AI tools. But this does not include products embedded in other systems that pose minimal risks, such as autocomplete functions in email.[144]

9.119Another approach taken by some jurisdictions is to limit disclosure to publicly facing tools and distinguish between AI tools used in administrative versus adjudicative roles. In California there is a mandatory requirement for court staff using GenAI for any purpose, and judicial officers using GenAI for tasks outside their adjudicative role, to disclose:

the use of or reliance on generative AI if the final version of a written, visual, or audio work provided to the public consists entirely of generative AI outputs.[145]

9.120There is also a discretionary obligation to consider disclosure if judicial officers use GenAI within their adjudicative role to create content provided to the public. But it is acknowledged that basing disclosure on whether a judicial officer has used AI in their adjudicative role ‘could create difficulties for courts’.[146]

9.121Disclosure is critical because there is currently a high level of distrust toward AI systems in Australia. A 2025 study by the University of Melbourne and KPMG ranked Australia among the lowest countries in the world for trust and acceptance of AI.[147] Only 36 per cent of Australians are willing to trust AI systems.[148]

9.122Not only are there high levels of distrust towards AI, but trust in public institutions across Australia has been falling and courts are not immune to that trend.[149] Professor Gabrielle Appleby has warned that:

In the judicial sphere, the trust that might have been previously reposed in exclusive judicial self-regulation, characterised by informality and opaqueness, no longer exists, or at least, is no longer sufficient.[150]

9.123Disclosure of AI tools used in judicial systems has received international support. The UN Special Rapporteur on the independence of judges and lawyers recommended that ‘key information about judicial AI systems be made publicly available, to permit legal challenges and oversight by civil society.’[151]

9.124If courts want to leverage AI to deliver court services, they need to build public confidence. One report suggested that ‘people are more likely to trust AI systems when they believe they understand AI and when and how it is used in common applications and have received AI education or training.’[152] The principles of contestability, transparency and accountability depend upon identifying the appropriate level of disclosure.

Disclosure in an AI inventory for Victoria’s courts and VCAT

9.125At a time when AI technology is still rapidly evolving, it is recommended that all AI tools procured, deployed or developed by Victoria’s courts and VCAT should be publicly disclosed in an AI inventory.

9.126This should include disclosure of any AI tool which is made available to CSV or court and tribunal staff or judicial officers. Disclosure should be aimed at an organisational level to capture tools that have been implemented in Victoria’s courts and VCAT for administrative purposes, such as transcription and translation tools. It should also include AI tools made available to judicial officers, such as legal research tools, although this does not require individual judicial officers or court staff to disclose every individual use of AI (see the discussion on judicial officer disclosure in Chapter 8).

9.127While this may result in the disclosure of low risk uses, transparency is necessary now to build public confidence in the use of AI tools by Victoria’s courts and VCAT. Representatives of the Public Record Office Victoria stated that:

We are in a rapidly changing moment with the introduction of AI. It is better for tighter regulations on AI use until things become better understood and practices become more managed … in terms of governance, we suggest erring on the side of caution including about what you disclose, at least for some time.[153]

9.128This was supported by other stakeholders who echoed that disclosure ‘should apply even if the process in question is in a seemingly mundane area (such as filing) and its use is for administrative rather than judicial purposes’.[154]

9.129It is recognised that there may be difficulties in identifying where AI has been incorporated into existing products because of the growth of embedded AI (discussed in Chapter 3). To make a meaningful disclosure, Victoria’s courts and tribunals should take reasonable steps to identify whether new software or technology employs AI. Some stakeholders suggested that this disclosure could involve ‘identifying the type of system and where it is being deployed’.[155]

9.130Representatives of the County Court considered several ways courts and tribunals could publish information about AI tools. For example:

•court or tribunal websites

•court or tribunal annual reports

•CSV’s annual report if systems are made available across multiple jurisdictions.[156]

9.131As an example, the Coroners Court has published information on its website about the AI pilot program.[157] Representatives of the Public Record Office Victoria were also supportive of Victoria’s courts and VCAT developing an AI inventory or register and highlighted that it should be actively maintained and publicly accessible.[158]

9.132At a minimum CSV should coordinate the publishing of an AI inventory that captures tools used by all of Victoria’s courts and VCAT. Having a coordinated list will help to identify any duplication of AI tools and opportunities for consistency. This will also assist in the identification of potential risks across CSV, courts and tribunals. Individual courts may also choose to publish information on their website or in their annual reports.

9.133As we highlight at paragraph [9.118], an example of how courts can publish information on AI usage is the Judicial Branch of the State of Connecticut, which conducts an annual inventory of all systems that employ AI used by the Judicial Branch. This inventory is published on its website.[159]

9.134It is important for an AI inventory to be regularly updated to ensure it is accurate and comprehensive. The process of courts and tribunals undertaking a regular stocktake serves an equally important function by raising awareness about the status and availability of AI systems within Victoria’s courts and VCAT. It is recommended that the AI inventory be updated annually.

Recommendation

20.Court Services Victoria should coordinate an AI inventory, reasonably identifying AI tools designed, developed, deployed or procured by Court Services Victoria, Victoria’s courts and VCAT, which should be published and updated annually.

Community consultation on AI use by Victoria’s courts and VCAT

9.135In addition to disclosure, some stakeholders supported Victoria’s courts and VCAT undertaking community consultation on AI tools.

9.136Consultation is a key feature of international court-issued AI policies. In Canada, guidance provided to courts emphasises that consultation with the community is critical to planning and assessing the need for, and feasibility of, any AI system.[160]

9.137We heard from human rights groups that community consultation was integral to ensuring that AI use in courts and VCAT aligned with a human rights approach. The Human Rights Law Centre recommended that:

Civil society, legal professionals, and affected communities must be regularly consulted on the use of AI systems in Victoria’s courts to ensure these systems reflect the needs and expectations of Victorians.[161]

9.138This was supported by community legal centre representatives who said there was value in courts and tribunals conducting consultations and user testing.[162] Additionally, the Office of the Victorian Information Commissioner strongly agreed that ‘courts and tribunals should consult with the public before using AI’.[163]

9.139Some court users raised concerns about inadequate consultation when new technologies had previously been implemented in Victoria’s courts and VCAT. The Northern Community Legal Centre shared that:

a recent 2024 review of pre-court information forms by the Magistrates’ Court of Victoria provided Northern CLC with a window of only 48 hours to provide a written submission based upon our research with court service users. It is not apparent if courts have tested the accessibility and useability of their online forms with court service users, and particularly those from marginalised cohorts that are more likely to experience difficulties.[164]

9.140Some courts recognised the importance of consultation when introducing AI tools. The County Court stated, ‘Consultation with key stakeholders will also assist in determining when and how the Court uses AI.’[165]

9.141The importance of consultation has been recognised internationally. The UN Special Rapporteur on the independence of judges and lawyers recommended that when considering AI tools, judiciaries should engage in multistakeholder consultations.[166]

9.142As discussed in Chapter 6, consultation with the legal profession and court users, particularly those from marginalised or disadvantaged groups, is critical to implementing the principles of transparency and open justice, impartiality and fairness, and efficiency and effectiveness.

9.143Stakeholders highlighted that when consultation may be necessary ‘will depend on the intended use of any AI applications and the associated risks’.[167] Not every use of AI will require consultation. The Supreme Court considered lower risk AI uses may not always require consultation:

in relation to AI that generates backgrounds and cancels out noise in virtual hearings, it would not appear to be necessary for the Court to understand the data that the AI was trained on, to disclose to or consult court users on the use of the AI.[168]

9.144Representatives of the Judicial College of Victoria considered that the threshold to consult should be high, for instance where the AI tool may impact people’s liberty.[169] They also raised concerns about placing mandatory obligations to consult on courts:

Courts are different to regulatory agencies and consultation obligations do not fit well with courts. A moral obligation to consult exists. But a duty to consult is worrying from the perspective of needing to preserve courts’ independence.[170]

9.145To determine when consultation may be necessary, we heard that Victoria’s courts and VCAT could build consultation into risk assessments.[171] A proposed AI assurance framework to support Victoria’s courts and VCAT to assess risks of AI use cases is discussed below (from paragraph [9.183]). It contains considerations for consultation across the AI lifecycle.

9.146How courts and tribunals should consult is dependent on the AI use case being considered. Stakeholders discussed a variety of possible consultation approaches. The Castan Centre recommended the establishment of a standing consultation forum:

it is significantly harder for individuals involved in courts and tribunals and grassroots organisations who do not necessarily consider themselves as part of the established justice landscape to be empowered and included within reforms. Therefore, deliberate efforts need to be made to seek out and hear from these voices. This kind of consumer and community engagement is standard practice in health services research and system evaluation, and consistent with a human rights-based approach.[172]

9.147Standing court user groups are already being used in some of Victoria’s courts. Representatives of the Coroners Court told us that they have a court user group. They considered that AI could become a standing item on the agenda for that group.[173]

9.148Other legal organisations have implemented standing advisory groups which include membership of people with lived experience. Victoria Legal Aid’s Data and Digital Ethics and Human Rights Advisory Group is used to review projects to

ensure they meet ethical and human rights obligations and that there are controls in place, to detect things like bias or identify when and how to consult with impacted communities.[174]

9.149Consultation and user testing are also features of international court and tribunal policies.[175]

9.150Victoria’s courts and VCAT should consider what consultation mechanism is most appropriate based on the AI use case being considered. Input from a range of relevant stakeholders with diverse backgrounds should be considered. This includes ‘court users from marginalised backgrounds and the services who work with them’.[176]

9.151We also heard that when testing AI tools there should be a focus on including marginalised individuals and groups. As an example, representatives from the Victorian Advocacy League for Individuals with Disabilities stated that where relevant ‘AI tools should be trialled with people with strong to moderate intellectual disability or people with short-term memory issues’.[177]

9.152We heard that there is value in ongoing user testing and in providing avenues for court users to give ongoing feedback. The Federation of Community Legal Centres and Justice Connect recommended that:

user feedback should be incorporated to continuously improve AI tools over time. Allowing the public to report issues or inaccuracies with AI-generated advice will ensure that the system can adapt to users’ needs and enhance its reliability.[178]

9.153Victoria’s courts and VCAT should consider implementing processes to ensure there is an accessible avenue for court users to provide meaningful feedback on their experience of AI systems.

Recommendation 21.Victoria’s courts and VCAT should consult with people likely affected by AI tools based on the AI assurance framework (set out in recommendation 23). Consultation should occur before implementing AI tools and throughout the AI lifecycle.

Notification and human oversight considerations for AI decision making

9.154In Chapter 6 we identified considerations for courts and tribunals to ensure that the incorporation of AI does not undermine people’s rights to challenge decisions.

9.155These considerations include:

•notifying people whose rights are significantly affected by a decision made or materially influenced by AI

•ensuring there is human oversight of decisions made or materially influenced by AI.

9.156Each of these is discussed below.

Notification and explanation of AI use

9.157Victoria’s courts and VCAT should notify people whose rights are significantly affected by a decision made or materially influenced by AI.

9.158This notification needs to be clear and understandable. Australia’s National framework for the assurance of artificial intelligence in government, while not specific to courts, provides useful direction. It advises that governments should disclose the use of AI to people who may be impacted and provide clear and simple explanations for how an AI system reaches an outcome.[179] Information should also be tailored to the intended audience so that it is understandable.

9.159However, as we discuss in Chapter 3, complexity and proprietary considerations can limit and even prevent the explainability of AI tools. Representatives of the Monash Digital Law Group stated that while ‘it seems on the face of it to be a reasonable request that a human explain’ how an AI decision is made, ‘it is increasingly difficult to do so’.[180]

9.160Despite the opacity of AI tools, the Gradient Institute and the CSIRO have stated that to implement the Australian Government’s AI Ethics Principle of contestability it is ‘essential to provide impacted individuals with an adequate understanding of how the system decided their outcome and what data the decision was based on so that they have grounds to contest’.[181]

9.161To enable Victoria’s courts and VCAT to provide clear and understandable information about AI decisions, consideration should be given to the explainability of AI tools during their design or procurement.

9.162The UNESCO draft AI guidelines recommend courts adopt ‘AI systems that are transparent in terms of how the system was developed, how it operates, its training data, its limitations (its margin of error), its capabilities, and the purpose of the systems’.[182]

9.163Relevantly, the Australian Human Rights Commission has recommended that the:

Australian Government should not make administrative decisions, including through the use of automation or artificial intelligence, if the decision maker cannot generate reasons or a technical explanation for an affected person.[183]

9.164When making decisions about future AI use cases, Victoria’s courts and VCAT should exercise caution and avoid using tools where there are technical complexities or proprietary constraints which could prevent them from being able to provide understandable explanations for decisions.

9.165Victoria’s courts and VCAT should seek to obtain information about how an AI tool was developed and how it works before implementation and should preference systems that can generate reasons or a technical explanation for decisions.

9.166Contestability and procedural fairness are considered as part of the proposed AI assurance framework for Victoria’s courts and VCAT discussed from paragraph [9.183].

Human oversight of AI decisions by courts and tribunals

9.167Victoria’s courts and VCAT should retain human oversight of decisions made or materially influenced by AI.

9.168The Centre for the Future of the Legal Profession and UNSW Law and Justice stated that human scrutiny and oversight of AI decisions is necessary to provide reassurance to members of the public and to support trust in the rule of law.[184] The Coroners Court similarly stated ‘Human oversight remains a critical component of using emerging technologies.’[185]

9.169In Canada, the Office of the Commissioner for Federal Judicial Affairs stated ‘Human oversight of AI is essential… at all stages for validating results and making any necessary corrections’.[186]

9.170However, the level of human oversight required is context dependent. Necessary human oversight may be informed by the type of tool used, the particular use to which it is applied and the stage of the AI lifecycle. For example, greater oversight may be needed when a tool is at the early stages of development.

9.171The Australian Human Rights Commission has advised that:

There is an important role for people in overseeing, monitoring and intervening in AI-informed decision making. Human involvement is especially important to:

•review individual decisions, especially to correct for errors at the individual level

•oversee the operation of an AI-informed decision-making system to ensure the system is operating effectively as a whole.[187]

9.172Victoria’s courts and VCAT should ensure that the introduction of AI does not prevent people significantly affected by a decision made or materially influenced by AI from seeking human intervention. Courts and tribunals should make it clear who is responsible for AI decisions so that people can access human-to-human interventions. This will require Victoria’s courts and VCAT to designate individuals with clear responsibility for AI decisions.

Recommendation 22.Victoria’s courts and VCAT should:

a.notify people whose rights are significantly affected by a decision made or materially influenced by AI. Notification should include clear and understandable information on how the decision was made.

b.ensure there is human oversight of decisions made or materially influenced by AI. The extent of oversight will depend on the context.

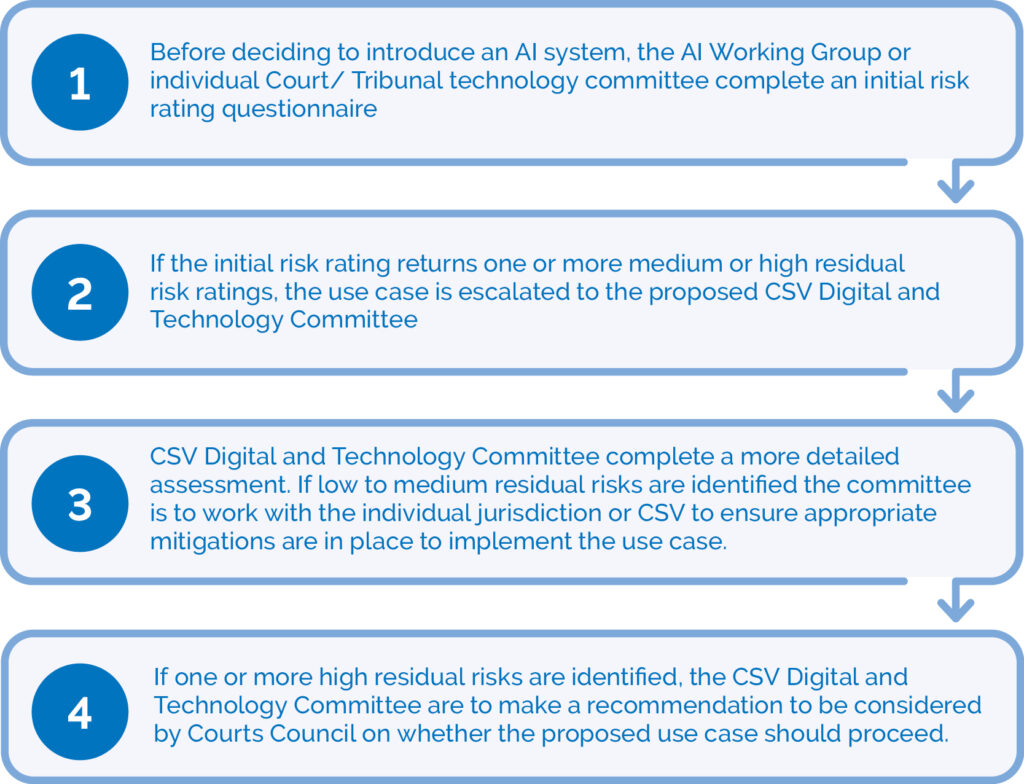

Developing an AI assurance framework

9.173Our terms of reference ask us to consider principles or guidelines that can be used in the future to assess the suitability of new AI systems in Victoria’s courts and tribunals.

9.174Assurance frameworks can provide a structured process for assessing risks and the suitability of an AI system. Assurance frameworks can guide decision making at different project phases to support:

•procurement

•design and development

•data collection and training

•deployment and use

•monitoring and evaluation.

9.175Examples of AI assurance frameworks range from general to court-specific frameworks. While these frameworks vary in content, important common elements include:

•Identifying risks, benefits and purpose: Often a series of questions are included to prompt users to identify risks. Many frameworks require users to identify the purpose and benefit to be delivered by the AI use case. Having a clear purpose can help ensure the adoption of AI tools leads to the realisation of benefits through improved processes or outcomes.

•Categorising and evaluating risks: AI assurance frameworks categorise risks in different ways. The level of risk can help determine what mitigations are required. Some frameworks assign a risk category (for instance low, medium or high) to specific AI use cases. For example, facial recognition tools may be categorised as high risk. These approaches are simple to apply and provide certainty but remove discretion. Lists of categorised use cases can also quickly become outdated. Other frameworks generate risk ratings by using a risk matrix to assess the likelihood and consequence of risks to principles. This allows decision makers to retain a high level of discretion and can be applied more flexibly to emerging technologies. But it can be complex and requires interpretation, which can create inconsistency.

•Treating risks: Once risks have been identified and categorised, some frameworks require users to develop a mitigation plan. An organisation’s risk tolerance will inform what action is required for each risk category.

•Monitoring and review: Most frameworks require continuous monitoring and evaluation and encourage users to regularly reassess risks.

•Assigning roles and responsibilities: AI assurance frameworks often encourage users to designate and document roles and responsibilities which can increase accountability and transparency.
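The two approaches to categorising risk described above can be contrasted in a short sketch. The following Python is illustrative only. The three-point scales, the multiplication of likelihood by consequence, the score thresholds and the function names are all assumptions made for this example and are not drawn from any of the frameworks discussed in this chapter. The fixed list borrows the facial recognition example from the text; its second entry is hypothetical.

```python
from enum import IntEnum
from typing import Optional


class Likelihood(IntEnum):
    # Hypothetical three-point scale (assumption, not from any framework)
    RARE = 1
    POSSIBLE = 2
    LIKELY = 3


class Consequence(IntEnum):
    # Hypothetical three-point scale (assumption, not from any framework)
    MINOR = 1
    MODERATE = 2
    SEVERE = 3


# Approach 1: a fixed category assigned to each named use case.
# Simple and certain, but silent on any use case that is not listed.
FIXED_CATEGORIES = {
    "facial recognition": "high",
    "noise cancellation in virtual hearings": "low",  # hypothetical entry
}


def category_rating(use_case: str) -> Optional[str]:
    """Return the pre-assigned category, or None if the use case is unlisted."""
    return FIXED_CATEGORIES.get(use_case)


# Approach 2: a risk matrix that combines likelihood and consequence.
# Works for any use case, but relies on the assessor's judgment of both inputs.
def matrix_rating(likelihood: Likelihood, consequence: Consequence) -> str:
    """Rate a risk from its likelihood and consequence (thresholds are assumed)."""
    score = int(likelihood) * int(consequence)
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


if __name__ == "__main__":
    print(category_rating("facial recognition"))
    print(category_rating("document summarisation"))
    print(matrix_rating(Likelihood.POSSIBLE, Consequence.SEVERE))
```

The fixed list gives a certain answer for a listed tool but has nothing to say about an unlisted one. The matrix can rate any use case, but two assessors who judge likelihood or consequence differently will reach different ratings, which is the inconsistency noted above.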

AI assurance frameworks for courts and tribunals

9.176AI assurance frameworks developed to date are largely generic. Many have been developed by governments to apply across all agencies and to many contexts.

9.177AI assessment frameworks have been developed for use by the Australian, New South Wales and Queensland governments.[188] The Commonwealth Scientific and Industrial Research Organisation’s Responsible AI Pattern Catalogue also includes direction for organisations to conduct responsible AI risk assessments.[189]

9.178We also heard that private companies like Microsoft have published AI impact assessment templates.[190]

9.179However, some organisations are adapting generic frameworks to better suit their specific needs. We heard that the Office of Public Prosecutions (OPP) is developing an AI Principles Framework and accompanying roadmap to guide and govern the implementation of AI which will align with the Victorian and Australian government AI guidance. It will also consider the specific operating context for the OPP ‘to reflect court and community expectations around AI’.[191]